The first Apache graph database project
+The first top-level graph project of the Apache Software Foundation

第一个 Apache 图数据库项目

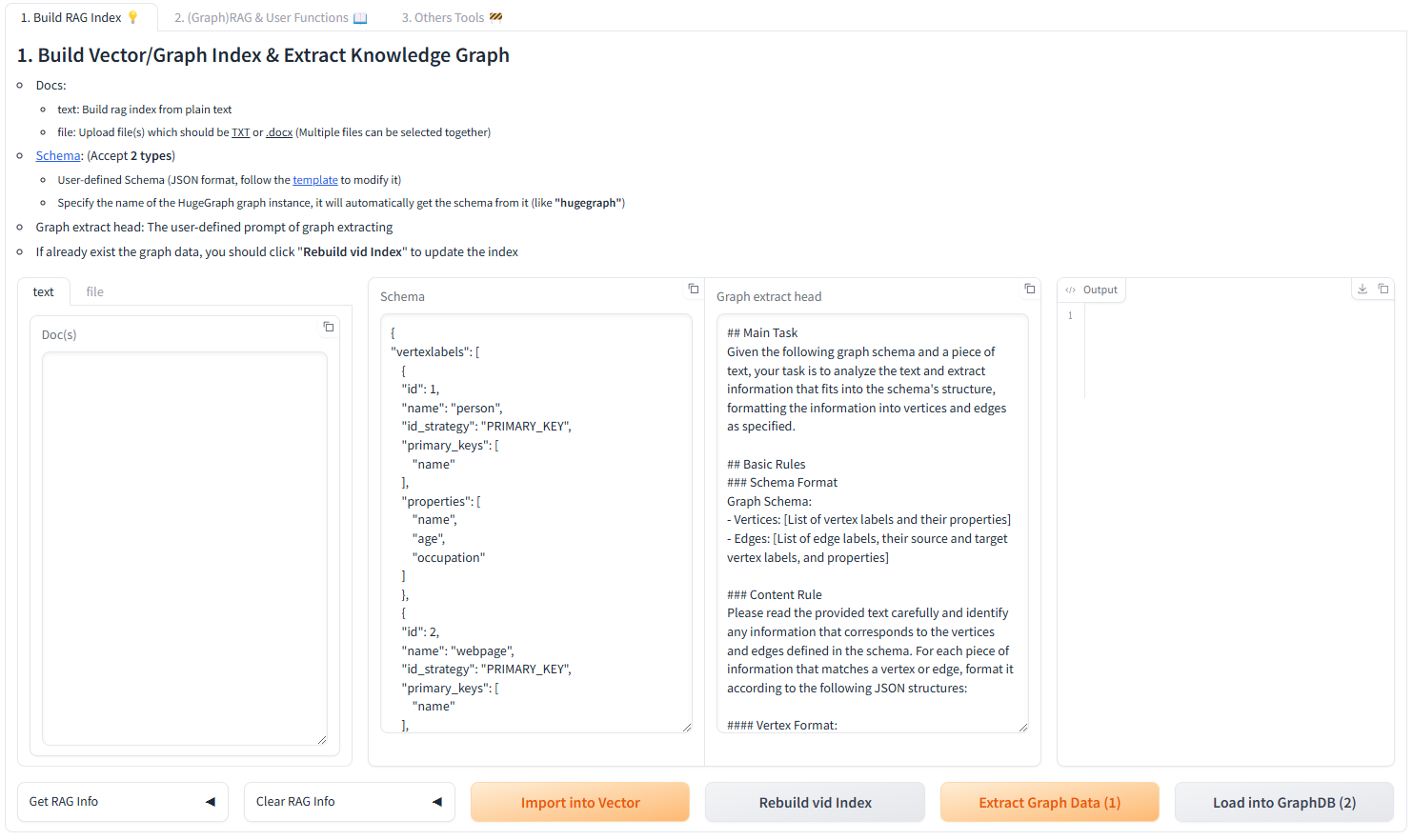

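{{< blocks/section >}}
{{% blocks/feature icon="far fa-tools" title="Use the easy-to-use **toolchain**" %}}
-Get the graph data import tool, the visual interface, and the backup/restore/migration tool from [here](https://github.com/apache/incubator-hugegraph-toolchain). You are welcome to use them.
+Get the graph data import tool, the visual interface, and the backup/restore/migration tool from [here](https://github.com/apache/hugegraph-toolchain). You are welcome to use them.
{{% /blocks/feature %}}
@@ -72,10 +85,10 @@ The first Apache graph database project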

{{% /blocks/feature %}}
-{{% blocks/feature icon="fab fa-weixin" title="Follow on WeChat" url="https://twitter.com/apache-hugegraph" %}}
-Follow the WeChat official account "HugeGraph"
+{{% blocks/feature icon="fab fa-weixin" title="Follow on WeChat" %}}
+Follow the WeChat official account "HugeGraph" for the latest updates
-(Twitter is on the way...)
+You can also join the [ASF Slack channel](https://the-asf.slack.com/archives/C059UU2FJ23) to take part in discussions
{{% /blocks/feature %}}
@@ -84,7 +97,7 @@ The first Apache graph database project

{{< blocks/section color="blue-light">}}Everyone is welcome to make any kind of contribution to HugeGraph
+Everyone is welcome to help build HugeGraph

+### K8s configuration options (optional)
+
+Corresponding configuration file: `rest-server.properties`
+
+| config option  | default value             | description                               |
+|----------------|---------------------------|-------------------------------------------|
+| server.use_k8s | false                     | Whether to enable K8s multi-tenancy mode. |
+| k8s.namespace  | hugegraph-computer-system | K8s namespace for compute jobs.           |
+| k8s.kubeconfig |                           | Path to the kubeconfig file.              |
+
+### Arthas diagnostic configuration options (optional)

+
+Corresponding configuration file: `rest-server.properties`
+
+| config option     | default value | description         |
+|-------------------|---------------|---------------------|
+| arthas.telnetPort | 8562          | Arthas telnet port. |
+| arthas.httpPort   | 8561          | Arthas HTTP port.   |
+| arthas.ip         | 0.0.0.0       | Arthas bind IP.     |
+
+### RPC server configuration

+
+| config option               | default value                           | description |
+|-----------------------------|-----------------------------------------|-------------|
+| rpc.client_connect_timeout  | 20                                      | Timeout (in seconds) for the RPC client to connect to the RPC server. |
+| rpc.client_load_balancer    | consistentHash                          | Load-balancing algorithm the RPC client uses to access multiple RPC servers in one cluster. The default, 'consistentHash', forwards requests according to their parameters. |
+| rpc.client_read_timeout     | 40                                      | Timeout (in seconds) for the RPC client to read from the RPC server. |
+| rpc.client_reconnect_period | 10                                      | Interval (in seconds) at which the RPC client reconnects to the RPC server. |
+| rpc.client_retries          | 3                                       | Number of retries when an RPC client call to the RPC server fails. |
+| rpc.config_order            | 999                                     | Loading order of the SOFA RPC configuration file; the larger the value, the later it is loaded. |
+| rpc.logger_impl             | com.alipay.sofa.rpc.log.SLF4JLoggerImpl | SOFA RPC logger implementation class. |
+| rpc.protocol                | bolt                                    | RPC communication protocol; the client and server must use the same value. |
+| rpc.remote_url              |                                         | Remote URLs of the RPC peers. Multiple addresses are separated by ','; an empty value disables it. |
+| rpc.server_adaptive_port    | false                                   | Whether the bound port is adaptive. If enabled and the port is in use, the next port (+1) is tried until an available one is found. This process is not atomic, so port conflicts are still possible. |
+| rpc.server_host             |                                         | Hosts/IPs the RPC server binds to; an empty value disables it. |
+| rpc.server_port             | 8090                                    | Port the RPC server binds to. |
+| rpc.server_timeout          | 30                                      | Timeout (in seconds) for RPC server execution. |
+
+### HBase backend configuration options

| config option           | default value | description                                         |
|-------------------------|---------------|-----------------------------------------------------|
@@ -253,7 +264,50 @@ weight: 2
| hbase.vertex_partitions | 10            | The number of partitions of the HBase vertex table. |
| hbase.edge_partitions   | 30            | The number of partitions of the HBase edge table.   |
-### MySQL & PostgreSQL backend configuration options
+### Cassandra backend configuration options

+
+| config option                  | default value  | description |
+|--------------------------------|----------------|-------------|
+| backend                        |                | Must be set to `cassandra`. |
+| serializer                     |                | Must be set to `cassandra`. |
+| cassandra.host                 | localhost      | The seed hostname or IP address of the Cassandra cluster. |
+| cassandra.port                 | 9042           | The seed port of the Cassandra cluster. |
+| cassandra.connect_timeout      | 5              | Timeout (in seconds) for the Cassandra driver to connect to the server. |
+| cassandra.read_timeout         | 20             | Timeout (in seconds) for the Cassandra driver to read from the server. |
+| cassandra.keyspace.strategy    | SimpleStrategy | The keyspace replication strategy; valid values are SimpleStrategy and NetworkTopologyStrategy. |
+| cassandra.keyspace.replication | [3]            | The keyspace replication factor for SimpleStrategy, like '[3]', or the replicas in each datacenter for NetworkTopologyStrategy, like '[dc1:2,dc2:1]'. |
+| cassandra.username             |                | The username used to log in to the Cassandra cluster. |
+| cassandra.password             |                | The password corresponding to cassandra.username. |
+| cassandra.compression_type     | none           | The compression algorithm for Cassandra transport: none/snappy/lz4. |
+| cassandra.jmx_port             | 7199           | The port of the Cassandra JMX API service. |
+| cassandra.aggregation_timeout  | 43200          | Timeout (in seconds) to wait for aggregation. |
+
+### ScyllaDB backend configuration options

+
+| config option | default value | description                |
+|---------------|---------------|----------------------------|
+| backend       |               | Must be set to `scylladb`. |
+| serializer    |               | Must be set to `scylladb`. |
+
+All other options are the same as for the Cassandra backend.
+
+### MySQL & PostgreSQL backend configuration options

| config option                    | default value | description |
|----------------------------------|---------------|-------------|
@@ -269,7 +323,10 @@ weight: 2
| jdbc.storage_engine              | InnoDB        | The storage engine of the backend store database, such as InnoDB/MyISAM/RocksDB for MySQL. |
| jdbc.postgresql.connect_database | template1     | The database to connect to when initializing the store, dropping the store, or checking whether the store exists. |
-### PostgreSQL backend configuration options
+### PostgreSQL backend configuration options

| config option | default value | description |
|---------------|---------------|-------------|
@@ -281,3 +338,6 @@ weight: 2
> The driver and URL of the PostgreSQL backend should be set to:
> - `jdbc.driver=org.postgresql.Driver`
> - `jdbc.url=jdbc:postgresql://localhost:5432/`
+
+-

-

- YYYY-MM-DD New Committer: xxx -

- ... -

Old versions (non-ASF releases)
-Due to ASF policy, non-ASF release packages cannot be hosted directly on this page. For download instructions for old versions before 1.0.0 (non-ASF releases), please go to https://github.com/apache/incubator-hugegraph-doc/wiki/Apache-HugeGraph-(Incubating)-Old-Versions-Download
-
diff --git a/content/cn/docs/quickstart/hugegraph-ai/config-reference.md b/content/cn/docs/quickstart/hugegraph-ai/config-reference.md

new file mode 100644

index 000000000..4172ae12e

--- /dev/null

+++ b/content/cn/docs/quickstart/hugegraph-ai/config-reference.md

@@ -0,0 +1,396 @@

+---
+title: "Configuration Reference"
+linkTitle: "Configuration Reference"
+weight: 4
+---
+
+This document is a complete reference for all HugeGraph-LLM configuration options.

+

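+## Configuration Files
+
+- **Environment file**: `.env` (created from a template or generated automatically)
+- **Prompt configuration**: `src/hugegraph_llm/resources/demo/config_prompt.yaml`
+
+> [!TIP]
+> Run `python -m hugegraph_llm.config.generate --update` to generate or update the configuration files with default values.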

+

+## Environment Variable Overview
+
+### 1. Language and Model Type Selection
+
+```bash
+# Prompt language (affects the system prompts and the generated text)
+LANGUAGE=EN # Options: EN | CN
+
+# LLM type for each task
+CHAT_LLM_TYPE=openai # Chat/RAG: openai | litellm | ollama/local
+EXTRACT_LLM_TYPE=openai # Entity extraction: openai | litellm | ollama/local
+TEXT2GQL_LLM_TYPE=openai # Text to Gremlin: openai | litellm | ollama/local
+
+# Embedding model type
+EMBEDDING_TYPE=openai # Options: openai | litellm | ollama/local
+
+# Reranker type (optional)
+RERANKER_TYPE= # Options: cohere | siliconflow | (leave empty for none)

+```
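The comments in this block list the values each selector accepts. As a hedged illustration, the following sketch reads `KEY=value # comment` lines in the style shown above and reports selectors whose value is not one of the listed options. It is not HugeGraph-LLM's own loader: the helpers `parse_env_line` and `check_selectors` are hypothetical, and the sketch assumes unquoted values where an inline comment starts with whitespace followed by `#`.

```python
import re

# Accepted values, copied from the comments in the .env block above.
LLM_TYPES = {"openai", "litellm", "ollama/local"}
ALLOWED = {
    "LANGUAGE": {"EN", "CN"},
    "CHAT_LLM_TYPE": LLM_TYPES,
    "EXTRACT_LLM_TYPE": LLM_TYPES,
    "TEXT2GQL_LLM_TYPE": LLM_TYPES,
    "EMBEDDING_TYPE": LLM_TYPES,
    "RERANKER_TYPE": {"", "cohere", "siliconflow"},  # empty means "no reranker"
}


def parse_env_line(line):
    """Return (key, value) for a KEY=value line, or None for blank/comment lines."""
    line = line.strip()
    if not line or line.startswith("#") or "=" not in line:
        return None
    key, _, rest = line.partition("=")
    # Treat whitespace followed by '#' as the start of an inline comment.
    value = re.split(r"\s+#", rest, maxsplit=1)[0].strip()
    return key.strip(), value


def check_selectors(text):
    """Return one message per selector whose value is not an accepted option."""
    errors = []
    for raw in text.splitlines():
        parsed = parse_env_line(raw)
        if parsed is None:
            continue
        key, value = parsed
        if key in ALLOWED and value not in ALLOWED[key]:
            errors.append(f"{key}={value!r} is not one of {sorted(ALLOWED[key])}")
    return errors


sample = """
LANGUAGE=EN # Options: EN | CN
CHAT_LLM_TYPE=openai
EMBEDDING_TYPE=ollama
RERANKER_TYPE= # leave empty for none
"""
for message in check_selectors(sample):
    print(message)
```

Here only `EMBEDDING_TYPE=ollama` is reported, because the accepted value is `ollama/local`.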

+

+### 2. OpenAI Configuration
+
+Each LLM task (chat, extract, text2gql) has its own configuration:
+
+#### 2.1 Chat LLM (RAG answer generation)

+

+```bash

+OPENAI_CHAT_API_BASE=https://api.openai.com/v1

+OPENAI_CHAT_API_KEY=sk-your-api-key-here

+OPENAI_CHAT_LANGUAGE_MODEL=gpt-4o-mini

+OPENAI_CHAT_TOKENS=8192 # Maximum tokens for chat responses

+```

+

+#### 2.2 Extract LLM (entity and relation extraction)

+

+```bash

+OPENAI_EXTRACT_API_BASE=https://api.openai.com/v1

+OPENAI_EXTRACT_API_KEY=sk-your-api-key-here

+OPENAI_EXTRACT_LANGUAGE_MODEL=gpt-4o-mini

+OPENAI_EXTRACT_TOKENS=1024 # Maximum tokens for extraction tasks

+```

+

+#### 2.3 Text2GQL LLM (natural language to Gremlin)

+

+```bash

+OPENAI_TEXT2GQL_API_BASE=https://api.openai.com/v1

+OPENAI_TEXT2GQL_API_KEY=sk-your-api-key-here

+OPENAI_TEXT2GQL_LANGUAGE_MODEL=gpt-4o-mini

+OPENAI_TEXT2GQL_TOKENS=4096 # Maximum tokens for query generation

+```

+

+#### 2.4 Embedding Model

+

+```bash

+OPENAI_EMBEDDING_API_BASE=https://api.openai.com/v1

+OPENAI_EMBEDDING_API_KEY=sk-your-api-key-here

+OPENAI_EMBEDDING_MODEL=text-embedding-3-small

+```

+

+> [!NOTE]
+> You can use a different API key or endpoint for each task, for example to optimize costs or to use specialized models.
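Because every task reads its own `OPENAI_<TASK>_<FIELD>` variables, one task can end up without a key while the others work. A minimal sketch that groups the variables by task and lists the missing ones; the helpers `openai_task_settings` and `missing_openai_vars` are hypothetical (not part of HugeGraph-LLM), and `env` stands for any mapping, such as `os.environ` or values parsed from `.env`.

```python
TASKS = ("CHAT", "EXTRACT", "TEXT2GQL")
FIELDS = ("API_BASE", "API_KEY", "LANGUAGE_MODEL", "TOKENS")


def openai_task_settings(env, task):
    """Collect the OPENAI_<TASK>_<FIELD> values for one task into a dict."""
    return {field.lower(): env.get(f"OPENAI_{task}_{field}") for field in FIELDS}


def missing_openai_vars(env):
    """List OPENAI_<TASK>_<FIELD> names that are unset or empty."""
    return [
        f"OPENAI_{task}_{field}"
        for task in TASKS
        for field in FIELDS
        if not env.get(f"OPENAI_{task}_{field}")
    ]


env = {
    "OPENAI_CHAT_API_BASE": "https://api.openai.com/v1",
    "OPENAI_CHAT_API_KEY": "sk-chat-key",
    "OPENAI_CHAT_LANGUAGE_MODEL": "gpt-4o-mini",
    "OPENAI_CHAT_TOKENS": "8192",
    # Extraction uses a separate key, e.g. to track its cost separately.
    "OPENAI_EXTRACT_API_BASE": "https://api.openai.com/v1",
    "OPENAI_EXTRACT_API_KEY": "sk-extract-key",
    "OPENAI_EXTRACT_LANGUAGE_MODEL": "gpt-4o-mini",
    "OPENAI_EXTRACT_TOKENS": "1024",
    # Text2GQL is only partially configured.
    "OPENAI_TEXT2GQL_API_BASE": "https://api.openai.com/v1",
    "OPENAI_TEXT2GQL_LANGUAGE_MODEL": "gpt-4o-mini",
}
print(openai_task_settings(env, "EXTRACT")["api_key"])
print(missing_openai_vars(env))
```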

+

+### 3. LiteLLM Configuration (Multi-Provider Support)
+
+LiteLLM provides unified access to more than 100 LLM providers (OpenAI, Anthropic, Google, Azure, and others).

+

+#### 3.1 Chat LLM

+

+```bash

+LITELLM_CHAT_API_BASE=http://localhost:4000 # LiteLLM proxy URL
+LITELLM_CHAT_API_KEY=sk-litellm-key # LiteLLM API key

+LITELLM_CHAT_LANGUAGE_MODEL=anthropic/claude-3-5-sonnet-20241022

+LITELLM_CHAT_TOKENS=8192

+```

+

+#### 3.2 Extract LLM

+

+```bash

+LITELLM_EXTRACT_API_BASE=http://localhost:4000

+LITELLM_EXTRACT_API_KEY=sk-litellm-key

+LITELLM_EXTRACT_LANGUAGE_MODEL=openai/gpt-4o-mini

+LITELLM_EXTRACT_TOKENS=256

+```

+

+#### 3.3 Text2GQL LLM

+

+```bash

+LITELLM_TEXT2GQL_API_BASE=http://localhost:4000

+LITELLM_TEXT2GQL_API_KEY=sk-litellm-key

+LITELLM_TEXT2GQL_LANGUAGE_MODEL=openai/gpt-4o-mini

+LITELLM_TEXT2GQL_TOKENS=4096

+```

+

+#### 3.4 Embedding Model

+

+```bash

+LITELLM_EMBEDDING_API_BASE=http://localhost:4000

+LITELLM_EMBEDDING_API_KEY=sk-litellm-key

+LITELLM_EMBEDDING_MODEL=openai/text-embedding-3-small

+```

+

+**Model format**: `provider/model-name`
+
+Examples:
+- `openai/gpt-4o-mini`
+- `anthropic/claude-3-5-sonnet-20241022`
+- `google/gemini-2.0-flash-exp`
+- `azure/gpt-4`
+
+For the full list, see [LiteLLM Providers](https://docs.litellm.ai/docs/providers).
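The provider prefix is what LiteLLM uses to pick a provider, so a malformed value is worth catching before the service starts. A small sketch (the helper `split_model` is hypothetical) that enforces only the `provider/model-name` format described here; LiteLLM itself may also accept some bare model names, so treat a failure as a warning rather than a hard error. It splits at the first `/`, so any further slashes stay in the model name.

```python
def split_model(model):
    """Split a 'provider/model-name' string at the first '/'."""
    provider, sep, name = model.partition("/")
    if not sep or not provider or not name:
        raise ValueError(f"expected 'provider/model-name', got {model!r}")
    return provider, name


for value in ("anthropic/claude-3-5-sonnet-20241022", "openai/gpt-4o-mini", "gpt-4o-mini"):
    try:
        print(split_model(value))
    except ValueError as err:
        print("warning:", err)
```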

+

+### 4. Ollama Configuration (Local Deployment)
+
+Run LLMs locally with Ollama for data privacy and cost control.

+

+#### 4.1 Chat LLM

+

+```bash

+OLLAMA_CHAT_HOST=127.0.0.1

+OLLAMA_CHAT_PORT=11434

+OLLAMA_CHAT_LANGUAGE_MODEL=llama3.1:8b

+```

+

+#### 4.2 Extract LLM

+

+```bash

+OLLAMA_EXTRACT_HOST=127.0.0.1

+OLLAMA_EXTRACT_PORT=11434

+OLLAMA_EXTRACT_LANGUAGE_MODEL=llama3.1:8b

+```

+

+#### 4.3 Text2GQL LLM

+

+```bash

+OLLAMA_TEXT2GQL_HOST=127.0.0.1

+OLLAMA_TEXT2GQL_PORT=11434

+OLLAMA_TEXT2GQL_LANGUAGE_MODEL=qwen2.5-coder:7b

+```

+

+#### 4.4 Embedding Model

+

+```bash

+OLLAMA_EMBEDDING_HOST=127.0.0.1

+OLLAMA_EMBEDDING_PORT=11434

+OLLAMA_EMBEDDING_MODEL=nomic-embed-text

+```

+

+> [!TIP]
+> Pull every model before use, for example `ollama pull llama3.1:8b`, `ollama pull qwen2.5-coder:7b`, and `ollama pull nomic-embed-text`.
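With a single Ollama server, all four tasks above usually point at the same host and port, and every model named in the configuration must be pulled first. A hedged sketch that groups tasks by endpoint and prints the matching `ollama pull` commands. The helpers are hypothetical, the sketch only reads configuration values (it never contacts Ollama), and the fallbacks `127.0.0.1` and `11434` are assumptions taken from the examples above.

```python
from collections import defaultdict

TASKS = ("CHAT", "EXTRACT", "TEXT2GQL", "EMBEDDING")


def model_key(task):
    # The embedding model uses *_MODEL; the LLM tasks use *_LANGUAGE_MODEL.
    return f"OLLAMA_{task}_MODEL" if task == "EMBEDDING" else f"OLLAMA_{task}_LANGUAGE_MODEL"


def ollama_endpoints(env):
    """Map each Ollama base URL to the tasks configured to use it."""
    servers = defaultdict(list)
    for task in TASKS:
        host = env.get(f"OLLAMA_{task}_HOST", "127.0.0.1")
        port = int(env.get(f"OLLAMA_{task}_PORT", "11434"))
        servers[f"http://{host}:{port}"].append(task)
    return dict(servers)


def models_to_pull(env):
    """Return the distinct model names, in task order."""
    models = []
    for task in TASKS:
        model = env.get(model_key(task))
        if model and model not in models:
            models.append(model)
    return models


env = {
    "OLLAMA_CHAT_LANGUAGE_MODEL": "llama3.1:8b",
    "OLLAMA_EXTRACT_LANGUAGE_MODEL": "llama3.1:8b",
    "OLLAMA_TEXT2GQL_LANGUAGE_MODEL": "qwen2.5-coder:7b",
    "OLLAMA_EMBEDDING_MODEL": "nomic-embed-text",
}
print(ollama_endpoints(env))
for model in models_to_pull(env):
    print(f"ollama pull {model}")
```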

+

+### 5. Reranker Configuration
+
+A reranker improves RAG accuracy by reordering the retrieved results by relevance.
+
+#### 5.1 Cohere Reranker

+

+```bash

+RERANKER_TYPE=cohere

+COHERE_BASE_URL=https://api.cohere.com/v1/rerank

+RERANKER_API_KEY=your-cohere-api-key

+RERANKER_MODEL=rerank-english-v3.0

+```

+

+Available models:
+- `rerank-english-v3.0` (English)
+- `rerank-multilingual-v3.0` (100+ languages)

+

+#### 5.2 SiliconFlow Reranker

+

+```bash

+RERANKER_TYPE=siliconflow

+RERANKER_API_KEY=your-siliconflow-api-key

+RERANKER_MODEL=BAAI/bge-reranker-v2-m3

+```

+

+### 6. HugeGraph Connection
+
+Configure the connection to a HugeGraph server instance.
+
+```bash
+# Server connection
+GRAPH_IP=127.0.0.1
+GRAPH_PORT=8080
+GRAPH_NAME=hugegraph # Graph instance name
+GRAPH_USER=admin # Username
+GRAPH_PWD=admin-password # Password
+GRAPH_SPACE= # Graph space (optional, for multi-tenancy)

+```

+

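+### 7. Query Parameters
+
+Control graph traversal behavior and result limits.
+
+```bash
+# Graph traversal limits
+MAX_GRAPH_PATH=10 # Maximum path depth for graph queries
+MAX_GRAPH_ITEMS=30 # Maximum number of items retrieved from the graph
+EDGE_LIMIT_PRE_LABEL=8 # Maximum number of edges per label type
+
+# Property filtering
+LIMIT_PROPERTY=False # Limit the properties included in results (True/False)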

+```
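To see how `EDGE_LIMIT_PRE_LABEL` and `MAX_GRAPH_ITEMS` interact, here is a sketch that applies both caps to an already retrieved list of edges. It is illustrative only: where and how HugeGraph-LLM applies these limits during traversal is not shown here, and the edge dictionaries and the `limit_edges` helper are hypothetical.

```python
from collections import defaultdict


def limit_edges(edges, per_label=8, max_items=30):
    """Keep at most `per_label` edges per label and `max_items` edges in total."""
    kept = []
    per_label_count = defaultdict(int)
    for edge in edges:
        label = edge["label"]
        if per_label_count[label] >= per_label:
            continue  # this label has already reached its quota
        per_label_count[label] += 1
        kept.append(edge)
        if len(kept) >= max_items:
            break  # global cap reached
    return kept


edges = [{"label": "acted_in", "id": i} for i in range(10)]
edges += [{"label": "directed", "id": 100 + i} for i in range(5)]
result = limit_edges(edges, per_label=3, max_items=5)
print([(e["label"], e["id"]) for e in result])
```

With the defaults shown above (8 per label, 30 in total), the same 15 edges shrink to 13: eight `acted_in` edges plus all five `directed` edges.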

+

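+### 8. Vector Search Configuration
+
+Configure the parameters of vector similarity search.
+
+```bash
+# Vector search thresholds
+VECTOR_DIS_THRESHOLD=0.9 # Minimum cosine similarity (0-1; higher is stricter)
+TOPK_PER_KEYWORD=1 # Top-K results for each extracted keyword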

+```
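If the comments above are taken at face value (the threshold is a minimum cosine similarity, and `TOPK_PER_KEYWORD` caps the matches per keyword), keyword matching can be sketched as follows. This is an assumption-laden illustration with made-up two-dimensional vectors, not HugeGraph-LLM's vector index; check how your version interprets `VECTOR_DIS_THRESHOLD` before tuning it.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def match_keyword(query_vec, index, threshold=0.9, top_k=1):
    """Return up to top_k (name, score) pairs with score >= threshold, best first."""
    scored = [(name, cosine(query_vec, vec)) for name, vec in index.items()]
    hits = [pair for pair in scored if pair[1] >= threshold]
    hits.sort(key=lambda pair: pair[1], reverse=True)
    return hits[:top_k]


index = {"Al Pacino": [1.0, 0.0], "The Godfather": [0.8, 0.6], "Heat": [0.0, 1.0]}
query = [1.0, 0.1]  # made-up embedding of an extracted keyword
print(match_keyword(query, index))  # strict threshold: only the closest match
print(match_keyword(query, index, threshold=0.8, top_k=2))  # looser threshold: two matches
```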

+

+### 9. Rerank Configuration
+
+```bash
+# Rerank result limit
+TOPK_RETURN_RESULTS=20 # Number of top results kept after reranking

+```
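Conceptually, the reranker gives each retrieved candidate a relevance score for the query, and `TOPK_RETURN_RESULTS` decides how many of the reordered candidates are kept. A sketch of that final step, in which the scores stand in for what a service such as Cohere or SiliconFlow would return; no API call is made, and `rerank_top_k` is a hypothetical helper.

```python
def rerank_top_k(candidates, scores, top_k=20):
    """Reorder candidates by descending score and keep the first top_k.

    Ties keep their original retrieval order because sorted() is stable.
    """
    if len(candidates) != len(scores):
        raise ValueError("expected exactly one score per candidate")
    ranked = sorted(zip(candidates, scores), key=lambda pair: pair[1], reverse=True)
    return [candidate for candidate, _ in ranked[:top_k]]


candidates = ["Heat (1995)", "Al Pacino", "Scarface (1983)", "The Godfather"]
scores = [0.12, 0.91, 0.40, 0.91]  # made-up relevance scores
print(rerank_top_k(candidates, scores, top_k=2))
```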

+

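+## Configuration Priority
+
+The system loads configuration in the following order (later sources override earlier ones):
+
+1. **Defaults** (in the `*_config.py` files)
+2. **Environment variables** (from the `.env` file)
+3. **Runtime updates** (through the Web UI or API calls)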
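This override order behaves like a layered lookup in which the highest-priority layer wins. A sketch using Python's `collections.ChainMap` with made-up values; it models only the precedence rules listed above, not how HugeGraph-LLM stores or persists its settings, and `source_of` is a hypothetical helper.

```python
from collections import ChainMap

defaults = {"GRAPH_IP": "127.0.0.1", "GRAPH_PORT": "8080", "LANGUAGE": "EN"}  # *_config.py defaults
dotenv = {"GRAPH_IP": "10.0.0.5"}  # values loaded from .env
runtime = {"LANGUAGE": "CN"}  # e.g. changed later in the Web UI

# ChainMap searches its maps left to right, so the highest-priority layer goes first.
effective = ChainMap(runtime, dotenv, defaults)
LAYERS = (("runtime", runtime), (".env", dotenv), ("default", defaults))


def source_of(key):
    """Name the layer that supplies the effective value of `key`."""
    for name, layer in LAYERS:
        if key in layer:
            return name
    raise KeyError(key)


for key in ("GRAPH_IP", "GRAPH_PORT", "LANGUAGE"):
    print(f"{key}={effective[key]} (from {source_of(key)})")
```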

+

+## Configuration Examples
+
+### Minimal Configuration (OpenAI)
+
+```bash
+# Language

+LANGUAGE=EN

+

+# LLM types

+CHAT_LLM_TYPE=openai

+EXTRACT_LLM_TYPE=openai

+TEXT2GQL_LLM_TYPE=openai

+EMBEDDING_TYPE=openai

+

+# OpenAI credentials (one key shared by all tasks)

+OPENAI_API_BASE=https://api.openai.com/v1

+OPENAI_API_KEY=sk-your-api-key-here

+OPENAI_LANGUAGE_MODEL=gpt-4o-mini

+OPENAI_EMBEDDING_MODEL=text-embedding-3-small

+

+# HugeGraph connection

+GRAPH_IP=127.0.0.1

+GRAPH_PORT=8080

+GRAPH_NAME=hugegraph

+GRAPH_USER=admin

+GRAPH_PWD=admin

+```

+

+### Production Configuration (LiteLLM + Reranker)
+
+```bash
+# Prompt language (EN | CN)
+LANGUAGE=EN
+
+# Use LiteLLM for every task

+CHAT_LLM_TYPE=litellm

+EXTRACT_LLM_TYPE=litellm

+TEXT2GQL_LLM_TYPE=litellm

+EMBEDDING_TYPE=litellm

+

+# LiteLLM proxy

+LITELLM_CHAT_API_BASE=http://localhost:4000

+LITELLM_CHAT_API_KEY=sk-litellm-master-key

+LITELLM_CHAT_LANGUAGE_MODEL=anthropic/claude-3-5-sonnet-20241022

+LITELLM_CHAT_TOKENS=8192

+

+LITELLM_EXTRACT_API_BASE=http://localhost:4000

+LITELLM_EXTRACT_API_KEY=sk-litellm-master-key

+LITELLM_EXTRACT_LANGUAGE_MODEL=openai/gpt-4o-mini

+LITELLM_EXTRACT_TOKENS=256

+

+LITELLM_TEXT2GQL_API_BASE=http://localhost:4000

+LITELLM_TEXT2GQL_API_KEY=sk-litellm-master-key

+LITELLM_TEXT2GQL_LANGUAGE_MODEL=openai/gpt-4o-mini

+LITELLM_TEXT2GQL_TOKENS=4096

+

+LITELLM_EMBEDDING_API_BASE=http://localhost:4000

+LITELLM_EMBEDDING_API_KEY=sk-litellm-master-key

+LITELLM_EMBEDDING_MODEL=openai/text-embedding-3-small

+

+# Cohere reranker for better accuracy

+RERANKER_TYPE=cohere

+COHERE_BASE_URL=https://api.cohere.com/v1/rerank

+RERANKER_API_KEY=your-cohere-key

+RERANKER_MODEL=rerank-multilingual-v3.0

+

+# HugeGraph with authentication

+GRAPH_IP=prod-hugegraph.example.com

+GRAPH_PORT=8080

+GRAPH_NAME=production_graph

+GRAPH_USER=rag_user

+GRAPH_PWD=secure-password

+GRAPH_SPACE=prod_space

+

+# Tuned query parameters

+MAX_GRAPH_PATH=15

+MAX_GRAPH_ITEMS=50

+VECTOR_DIS_THRESHOLD=0.85

+TOPK_RETURN_RESULTS=30

+```

+

+### Local/Offline Configuration (Ollama)
+
+```bash
+# Language
+LANGUAGE=EN
+
+# Use local models through Ollama for every task

+CHAT_LLM_TYPE=ollama/local

+EXTRACT_LLM_TYPE=ollama/local

+TEXT2GQL_LLM_TYPE=ollama/local

+EMBEDDING_TYPE=ollama/local

+

+# Ollama endpoints

+OLLAMA_CHAT_HOST=127.0.0.1

+OLLAMA_CHAT_PORT=11434

+OLLAMA_CHAT_LANGUAGE_MODEL=llama3.1:8b

+

+OLLAMA_EXTRACT_HOST=127.0.0.1

+OLLAMA_EXTRACT_PORT=11434

+OLLAMA_EXTRACT_LANGUAGE_MODEL=llama3.1:8b

+

+OLLAMA_TEXT2GQL_HOST=127.0.0.1

+OLLAMA_TEXT2GQL_PORT=11434

+OLLAMA_TEXT2GQL_LANGUAGE_MODEL=qwen2.5-coder:7b

+

+OLLAMA_EMBEDDING_HOST=127.0.0.1

+OLLAMA_EMBEDDING_PORT=11434

+OLLAMA_EMBEDDING_MODEL=nomic-embed-text

+

+# No reranker in an offline environment

+RERANKER_TYPE=

+

+# Local HugeGraph

+GRAPH_IP=127.0.0.1

+GRAPH_PORT=8080

+GRAPH_NAME=hugegraph

+GRAPH_USER=admin

+GRAPH_PWD=admin

+```

+

+## Verifying the Configuration
+
+After editing `.env`, verify the configuration:
+
+1. **Through the Web UI**: open `http://localhost:8001` and check the settings panel
+2. **Through Python**:

+```python

+from hugegraph_llm.config import settings

+print(settings.llm_config)

+print(settings.hugegraph_config)

+```

+3. **Through the REST API**:

+```bash

+curl http://localhost:8001/config

+```
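The checks above need a running service. For the "API key not found" case in the troubleshooting table, a quick offline check can compare the selected provider types with the keys they require. A hedged sketch: the key names follow the per-task variables in sections 2, 3 and 5 of this page, it assumes `ollama/local` needs no key, and `missing_credentials` is a hypothetical helper; `env` can be `dict(os.environ)` or values parsed from `.env`.

```python
SELECTORS = (
    ("CHAT_LLM_TYPE", "CHAT"),
    ("EXTRACT_LLM_TYPE", "EXTRACT"),
    ("TEXT2GQL_LLM_TYPE", "TEXT2GQL"),
    ("EMBEDDING_TYPE", "EMBEDDING"),
)


def missing_credentials(env):
    """Return the *_API_KEY variables required by the selected types but left empty."""
    missing = []
    for selector, task in SELECTORS:
        kind = env.get(selector, "")
        if kind in ("openai", "litellm"):  # assumption: ollama/local needs no key
            key = f"{kind.upper()}_{task}_API_KEY"
            if not env.get(key):
                missing.append(key)
    if env.get("RERANKER_TYPE") and not env.get("RERANKER_API_KEY"):
        missing.append("RERANKER_API_KEY")
    return missing


env = {
    "CHAT_LLM_TYPE": "openai",
    "OPENAI_CHAT_API_KEY": "sk-your-api-key-here",
    "EXTRACT_LLM_TYPE": "litellm",
    "TEXT2GQL_LLM_TYPE": "ollama/local",
    "EMBEDDING_TYPE": "openai",
    "RERANKER_TYPE": "cohere",
}
print(missing_credentials(env))
```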

+

+## Troubleshooting
+
+| Problem | Solution |
+|---------|----------|
+| "API key not found" | Check that the `*_API_KEY` variables in `.env` are set correctly |
+| "Connection refused" | Verify that `GRAPH_IP` and `GRAPH_PORT` are correct |
+| "Model not found" | For Ollama: run `ollama pull <model-name>` |
+| "Rate limit exceeded" | Reduce `MAX_GRAPH_ITEMS` or use a different API key |
+| "Embedding dimension mismatch" | Delete the existing vectors and rebuild them with the correct model |

+

+## See Also
+
+- [HugeGraph-LLM Overview](./hugegraph-llm.md)
+- [REST API Reference](./rest-api.md)
+- [Quick Start Guide](./quick_start.md)

diff --git a/content/cn/docs/quickstart/hugegraph-ai/hugegraph-llm.md b/content/cn/docs/quickstart/hugegraph-ai/hugegraph-llm.md

index b353a8fba..d376b3de1 100644

--- a/content/cn/docs/quickstart/hugegraph-ai/hugegraph-llm.md

+++ b/content/cn/docs/quickstart/hugegraph-ai/hugegraph-llm.md

@@ -4,11 +4,11 @@ linkTitle: "HugeGraph-LLM"

weight: 1

---

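-> This page is a Chinese translation based on the English version; suggestions for improvements are always welcome. We recommend reading the [AI repository README](https://github.com/apache/incubator-hugegraph-ai/tree/main/hugegraph-llm#readme) for the latest information; the website is synchronized with it periodically.
+> This page is a Chinese translation based on the English version; suggestions for improvements are always welcome. We recommend reading the [AI repository README](https://github.com/apache/hugegraph-ai/tree/main/hugegraph-llm#readme) for the latest information; the website is synchronized with it periodically.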

> **A bridge between graph databases and large language models**

-> AI-summarized project documentation: [](https://deepwiki.com/apache/incubator-hugegraph-ai)
+> AI-summarized project documentation: [](https://deepwiki.com/apache/hugegraph-ai)

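## 🎯 Overview
@@ -19,7 +19,7 @@ HugeGraph-LLM is a powerful toolkit that combines graph databases and large
- 🗣️ **Natural language queries**: operate the graph database in natural language (Gremlin/Cypher).
- 🔍 **Graph-enhanced RAG**: use knowledge graphs to improve Q&A accuracy (GraphRAG and Graph Agent).
-For more source-code documentation, visit our [DeepWiki](https://deepwiki.com/apache/incubator-hugegraph-ai) page (recommended).
+For more source-code documentation, visit our [DeepWiki](https://deepwiki.com/apache/hugegraph-ai) page (recommended).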

## 📋 Requirements

@@ -90,8 +90,8 @@ docker run -itd --name=server -p 8080:8080 hugegraph/hugegraph

curl -LsSf https://astral.sh/uv/install.sh | sh

# 3. Clone and set up the project

-git clone https://github.com/apache/incubator-hugegraph-ai.git

-cd incubator-hugegraph-ai/hugegraph-llm

+git clone https://github.com/apache/hugegraph-ai.git

+cd hugegraph-ai/hugegraph-llm

# 4. Create a virtual environment and install dependencies

uv venv && source .venv/bin/activate

@@ -116,7 +116,7 @@ python -m hugegraph_llm.config.generate --update

```

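> [!TIP]
-> See our [Quick Start Guide](https://github.com/apache/incubator-hugegraph-ai/blob/main/hugegraph-llm/quick_start.md) for detailed usage examples and an explanation of the query logic.
+> See our [Quick Start Guide](https://github.com/apache/hugegraph-ai/blob/main/hugegraph-llm/quick_start.md) for detailed usage examples and an explanation of the query logic.
## 💡 Usage Examples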

@@ -131,7 +131,7 @@ python -m hugegraph_llm.config.generate --update

- **Files**: upload TXT or DOCX files (multiple selection supported)
**Schema configuration:**
-- **Custom schema**: follow the JSON format of our [template](https://github.com/apache/incubator-hugegraph-ai/blob/aff3bbe25fa91c3414947a196131be812c20ef11/hugegraph-llm/src/hugegraph_llm/config/config_data.py#L125)
+- **Custom schema**: follow the JSON format of our [template](https://github.com/apache/hugegraph-ai/blob/aff3bbe25fa91c3414947a196131be812c20ef11/hugegraph-llm/src/hugegraph_llm/config/config_data.py#L125)
- **HugeGraph schema**: use the schema of an existing graph instance (for example, "hugegraph")

@@ -214,7 +214,7 @@ graph TD

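## 🔧 Configuration
-After running the demo, the configuration files are generated automatically:
+After running the demo, the configuration files are generated automatically:
- **Environment**: `hugegraph-llm/.env`
- **Prompts**: `hugegraph-llm/src/hugegraph_llm/resources/demo/config_prompt.yaml`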

@@ -222,7 +222,80 @@ graph TD

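> [!NOTE]
> When you use the Web interface, configuration changes are saved automatically. For manual changes, refresh the page to load the updates.
-**LLM provider support**: this project uses [LiteLLM](https://docs.litellm.ai/docs/providers) for multi-provider LLM support.
+### LLM Provider Configuration
+
+This project uses [LiteLLM](https://docs.litellm.ai/docs/providers) for multi-provider LLM support, giving unified access to OpenAI, Anthropic, Google, Cohere, and more than 100 other providers.
+
+#### Option 1: Direct LLM Connection (OpenAI, Ollama)
+
+```bash
+# .env configuration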

+chat_llm_type=openai # or ollama/local
+openai_chat_api_key=sk-xxx
+openai_chat_api_base=https://api.openai.com/v1
+openai_chat_language_model=gpt-4o-mini
+openai_chat_tokens=4096

+```

+

+#### Option 2: LiteLLM Multi-Provider Support
+
+LiteLLM acts as a unified proxy for multiple LLM providers:
+
+```bash
+# .env configuration

+chat_llm_type=litellm

+extract_llm_type=litellm

+text2gql_llm_type=litellm

+

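+# LiteLLM settings (chat task shown; extract/text2gql use litellm_extract_* / litellm_text2gql_*)
+litellm_chat_api_base=http://localhost:4000 # LiteLLM proxy server
+litellm_chat_api_key=sk-1234 # LiteLLM API key
+
+# Model selection (provider/model format)
+litellm_chat_language_model=anthropic/claude-3-5-sonnet-20241022
+litellm_chat_tokens=4096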

+```

+

+**Supported providers**: OpenAI, Anthropic, Google (Gemini), Azure, Cohere, Bedrock, Vertex AI, Hugging Face, and more.
+
+For the full provider list and configuration details, see [LiteLLM Providers](https://docs.litellm.ai/docs/providers).

+

+### Reranker Configuration
+
+A reranker improves RAG accuracy by reordering the retrieved results. Supported providers (configure one of them):
+
+```bash

+# Option A: Cohere reranker
+reranker_type=cohere
+reranker_api_key=your-cohere-key
+reranker_model=rerank-english-v3.0
+
+# Option B: SiliconFlow reranker
+reranker_type=siliconflow
+reranker_api_key=your-siliconflow-key
+reranker_model=BAAI/bge-reranker-v2-m3

+```

+

+### Text2Gremlin Configuration
+
+Convert natural language into Gremlin queries:
+
+```python
+from hugegraph_llm.operators.graph_rag_task import Text2GremlinPipeline
+
+# Initialize the pipeline
+text2gremlin = Text2GremlinPipeline()
+
+# Generate a Gremlin query and execute it
+result = (
+    text2gremlin
+    .query_to_gremlin(query="Find all movies directed by Francis Ford Coppola")
+    .execute_gremlin_query()
+    .run()
+)
+```
+
+**REST API endpoints**: for details of the HTTP endpoints, see the [REST API documentation](./rest-api.md).

## 📚 Additional Resources

diff --git a/content/cn/docs/quickstart/hugegraph-ai/hugegraph-ml.md b/content/cn/docs/quickstart/hugegraph-ai/hugegraph-ml.md

new file mode 100644

index 000000000..baf0481f0

--- /dev/null

+++ b/content/cn/docs/quickstart/hugegraph-ai/hugegraph-ml.md

@@ -0,0 +1,289 @@

+---

+title: "HugeGraph-ML"

+linkTitle: "HugeGraph-ML"

+weight: 2

+---

+

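+HugeGraph-ML integrates HugeGraph with popular graph learning libraries, enabling end-to-end machine learning workflows directly on graph data.
+
+## Overview
+
+`hugegraph-ml` provides a unified interface for applying graph neural networks and machine learning algorithms to data stored in HugeGraph. By converting HugeGraph data seamlessly into formats that mainstream ML frameworks accept, it removes the need for complex data export/import pipelines.
+
+### Key Features
+
+- **Direct HugeGraph integration**: query graph data directly from HugeGraph without manual exports
+- **21 algorithm implementations**: comprehensive coverage of node classification, graph classification, embedding, and link prediction
+- **DGL backend**: uses the Deep Graph Library (DGL) for efficient training
+- **End-to-end workflows**: from data loading to model training and evaluation
+- **Modular tasks**: reusable task abstractions for common ML scenarios
+
+## Requirements
+
+- **Python**: 3.9+ (standalone module)
+- **HugeGraph Server**: 1.0+ (recommended: 1.5+)
+- **UV package manager**: 0.7+ (for dependency management)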

+

+## Installation
+
+### 1. Start HugeGraph Server
+
+```bash
+# Option 1: Docker (recommended)
+docker run -itd --name=hugegraph -p 8080:8080 hugegraph/hugegraph
+
+# Option 2: binary package
+# See https://hugegraph.apache.org/docs/download/download/

+```

+

+### 2. Clone and Set Up

+

+```bash

+git clone https://github.com/apache/hugegraph-ai.git

+cd hugegraph-ai/hugegraph-ml

+```

+

+### 3. Install Dependencies
+
+```bash
+# uv sync creates .venv automatically and installs all dependencies
+uv sync
+
+# Activate the virtual environment

+source .venv/bin/activate

+```

+

+### 4. Change to the Source Directory

+

+```bash

+cd ./src

+```

+

+> [!NOTE]
+> All examples assume that the virtual environment is activated.

+

+## Implemented Algorithms
+
+HugeGraph-ML currently implements **21 graph machine learning algorithms** across several categories:
+
+### Node Classification (11 algorithms)
+
+Predict the labels of graph nodes from the network structure and node features.
+
+| Algorithm | Paper | Description |
+|-----------|-------|-------------|
+| **GCN** | [Kipf & Welling, 2017](https://arxiv.org/abs/1609.02907) | Graph convolutional network |
+| **GAT** | [Veličković et al., 2018](https://arxiv.org/abs/1710.10903) | Graph attention network |
+| **GraphSAGE** | [Hamilton et al., 2017](https://arxiv.org/abs/1706.02216) | Inductive representation learning |
+| **APPNP** | [Klicpera et al., 2019](https://arxiv.org/abs/1810.05997) | Personalized PageRank propagation |
+| **AGNN** | [Thekumparampil et al., 2018](https://arxiv.org/abs/1803.03735) | Attention-based GNN |
+| **ARMA** | [Bianchi et al., 2019](https://arxiv.org/abs/1901.01343) | Auto-regressive moving average filters |
+| **DAGNN** | [Liu et al., 2020](https://arxiv.org/abs/2007.09296) | Deep adaptive graph neural network |
+| **DeeperGCN** | [Li et al., 2020](https://arxiv.org/abs/2006.07739) | Very deep GCN architecture |
+| **GRAND** | [Feng et al., 2020](https://arxiv.org/abs/2005.11079) | Graph random neural network |
+| **JKNet** | [Xu et al., 2018](https://arxiv.org/abs/1806.03536) | Jumping knowledge network |
+| **Cluster-GCN** | [Chiang et al., 2019](https://arxiv.org/abs/1905.07953) | Scalable GCN training via clustering |
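GCN, the first entry above, updates node features with the rule H' = σ(D̂^-1/2 (A + I) D̂^-1/2 H W) from Kipf & Welling, where A is the adjacency matrix, I adds self-loops, and D̂ is the degree matrix of A + I. A dependency-free sketch of the normalized propagation alone (no learned weight W, no activation σ) on a tiny undirected graph; it illustrates the rule from the paper and is not HugeGraph-ML's DGL-based model.

```python
import math


def gcn_propagate(num_nodes, edges, features):
    """Return D^-1/2 (A + I) D^-1/2 X for an undirected graph given as an edge list."""
    neighbors = [{i} for i in range(num_nodes)]  # each node starts with its self-loop
    for u, v in edges:
        neighbors[u].add(v)
        neighbors[v].add(u)
    degree = [len(adj) for adj in neighbors]  # degrees of A + I
    dim = len(features[0])
    out = []
    for i in range(num_nodes):
        row = [0.0] * dim
        for j in neighbors[i]:
            weight = 1.0 / math.sqrt(degree[i] * degree[j])  # symmetric normalization
            for k in range(dim):
                row[k] += weight * features[j][k]
        out.append(row)
    return out


edges = [(0, 1), (1, 2)]  # path graph 0 - 1 - 2
features = [[1.0], [0.0], [0.0]]  # only node 0 carries a signal
print(gcn_propagate(3, edges, features))
```

After one step the signal from node 0 reaches its neighbor 1 but not yet node 2; each additional layer widens the receptive field by one hop.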

+

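+### Graph Classification (2 algorithms)
+
+Classify entire graphs based on their structure and node features.
+
+| Algorithm | Paper | Description |
+|-----------|-------|-------------|
+| **DiffPool** | [Ying et al., 2018](https://arxiv.org/abs/1806.08804) | Differentiable graph pooling |
+| **GIN** | [Xu et al., 2019](https://arxiv.org/abs/1810.00826) | Graph isomorphism network |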

+

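+### Graph Embedding (3 algorithms)
+
+Learn unsupervised node representations for downstream tasks.
+
+| Algorithm | Paper | Description |
+|-----------|-------|-------------|
+| **DGI** | [Veličković et al., 2019](https://arxiv.org/abs/1809.10341) | Deep Graph Infomax (contrastive learning) |
+| **BGRL** | [Thakoor et al., 2021](https://arxiv.org/abs/2102.06514) | Bootstrapped graph representation learning |
+| **GRACE** | [Zhu et al., 2020](https://arxiv.org/abs/2006.04131) | Graph contrastive learning |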

+

+### Link Prediction (3 algorithms)
+
+Predict missing or future connections in a graph.
+
+| Algorithm | Paper | Description |
+|-----------|-------|-------------|
+| **SEAL** | [Zhang & Chen, 2018](https://arxiv.org/abs/1802.09691) | Subgraph extraction and labeling |
+| **P-GNN** | [You et al., 2019](http://proceedings.mlr.press/v97/you19b/you19b.pdf) | Position-aware GNN |
+| **GATNE** | [Cen et al., 2019](https://arxiv.org/abs/1905.01669) | Attributed multiplex heterogeneous network embedding |

+

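+### Fraud Detection (2 algorithms)
+
+Detect anomalous nodes in a graph (for example, fraudulent accounts).
+
+| Algorithm | Paper | Description |
+|-----------|-------|-------------|
+| **CARE-GNN** | [Dou et al., 2020](https://arxiv.org/abs/2008.08692) | Camouflage-resistant GNN |
+| **BGNN** | [Zheng et al., 2021](https://arxiv.org/abs/2101.08543) | Bipartite graph neural network |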

+

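+### Post-processing (1 algorithm)
+
+Improve predictions through label propagation.
+
+| Algorithm | Paper | Description |
+|-----------|-------|-------------|
+| **C&S** | [Huang et al., 2020](https://arxiv.org/abs/2010.13993) | Correct and Smooth (prediction refinement) |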

+

+## Usage Examples
+
+### Example 1: Node Embedding with DGI
+
+Use Deep Graph Infomax (DGI) for unsupervised node embedding on the Cora dataset.
+
+#### Step 1: Import the Dataset (if needed)
+
+```python
+from hugegraph_ml.utils.dgl2hugegraph_utils import import_graph_from_dgl
+
+# Import the Cora dataset from DGL into HugeGraph
+import_graph_from_dgl("cora")

+```

+

+#### Step 2: Convert the Graph Data
+
+```python
+from hugegraph_ml.data.hugegraph2dgl import HugeGraph2DGL
+
+# Convert HugeGraph data to DGL format

+hg2d = HugeGraph2DGL()

+graph = hg2d.convert_graph(vertex_label="CORA_vertex", edge_label="CORA_edge")

+```

+

+#### Step 3: Initialize the Model
+
+```python
+from hugegraph_ml.models.dgi import DGI
+
+# Create the DGI model

+model = DGI(n_in_feats=graph.ndata["feat"].shape[1])

+```

+

+#### Step 4: Train and Generate Embeddings
+
+```python
+from hugegraph_ml.tasks.node_embed import NodeEmbed
+
+# Train the model and generate node embeddings

+node_embed_task = NodeEmbed(graph=graph, model=model)

+embedded_graph = node_embed_task.train_and_embed(

+ add_self_loop=True,

+ n_epochs=300,

+ patience=30

+)

+```

+

+#### Step 5: Downstream Task (Node Classification)
+
+```python
+from hugegraph_ml.models.mlp import MLPClassifier
+from hugegraph_ml.tasks.node_classify import NodeClassify
+
+# Use the embeddings for node classification

+model = MLPClassifier(

+ n_in_feat=embedded_graph.ndata["feat"].shape[1],

+ n_out_feat=embedded_graph.ndata["label"].unique().shape[0]

+)

+node_clf_task = NodeClassify(graph=embedded_graph, model=model)

+node_clf_task.train(lr=1e-3, n_epochs=400, patience=40)

+print(node_clf_task.evaluate())

+```

+

+**Example output** (exact values vary from run to run):

+```python

+{'accuracy': 0.82, 'loss': 0.5714246034622192}

+```

+

+**Full example**: see [dgi_example.py](https://github.com/apache/hugegraph-ai/blob/main/hugegraph-ml/src/hugegraph_ml/examples/dgi_example.py)
+
+### Example 2: Node Classification with GRAND
+
+Use the GRAND model to classify nodes directly (no separate embedding step).

+

+```python

+from hugegraph_ml.data.hugegraph2dgl import HugeGraph2DGL

+from hugegraph_ml.models.grand import GRAND

+from hugegraph_ml.tasks.node_classify import NodeClassify

+

+# Load the graph

+hg2d = HugeGraph2DGL()

+graph = hg2d.convert_graph(vertex_label="CORA_vertex", edge_label="CORA_edge")

+

+# Initialize the GRAND model

+model = GRAND(

+ n_in_feats=graph.ndata["feat"].shape[1],

+ n_out_feats=graph.ndata["label"].unique().shape[0]

+)

+

+# Train and evaluate

+node_clf_task = NodeClassify(graph=graph, model=model)

+node_clf_task.train(lr=1e-2, n_epochs=1500, patience=100)

+print(node_clf_task.evaluate())

+```

+

+**Full example**: see [grand_example.py](https://github.com/apache/hugegraph-ai/blob/main/hugegraph-ml/src/hugegraph_ml/examples/grand_example.py)
+
+## Core Components
+
+### HugeGraph2DGL Converter
+
+Converts HugeGraph data into the DGL graph format:

+

+```python

+from hugegraph_ml.data.hugegraph2dgl import HugeGraph2DGL

+

+hg2d = HugeGraph2DGL()

+graph = hg2d.convert_graph(

+    vertex_label="person",  # Vertex label to extract
+    edge_label="knows",  # Edge label to extract
+    directed=False  # Whether the graph is directed

+)

+```
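DGL identifies nodes by consecutive integers and stores every edge as a directed source/destination pair, so a conversion like this has to remap HugeGraph vertex IDs and, for an undirected graph, store each edge in both directions. A hedged sketch of that step with hypothetical vertex IDs and a hypothetical `to_edge_index` helper; it is not the `HugeGraph2DGL` source. The resulting lists could then be passed to `dgl.graph((src, dst), num_nodes=len(vertex_ids))`.

```python
def to_edge_index(vertex_ids, edges, directed=False):
    """Map vertex IDs to 0..n-1 and build parallel src/dst lists.

    `edges` holds (out_vertex_id, in_vertex_id) pairs.
    """
    id_to_index = {vid: i for i, vid in enumerate(vertex_ids)}
    src, dst = [], []
    for out_id, in_id in edges:
        u, v = id_to_index[out_id], id_to_index[in_id]
        src.append(u)
        dst.append(v)
        if not directed:
            # Store the reverse edge too, so messages flow both ways.
            src.append(v)
            dst.append(u)
    return id_to_index, src, dst


vertex_ids = ["1:marko", "1:vadas", "1:josh"]
edges = [("1:marko", "1:vadas"), ("1:marko", "1:josh")]
mapping, src, dst = to_edge_index(vertex_ids, edges)
print(mapping, src, dst)
```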

+

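+### Task Abstractions
+
+Reusable task objects for common ML workflows:
+
+| Task | Class | Purpose |
+|------|-------|---------|
+| Node embedding | `NodeEmbed` | Generate unsupervised node embeddings |
+| Node classification | `NodeClassify` | Predict node labels |
+| Graph classification | `GraphClassify` | Predict graph-level labels |
+| Link prediction | `LinkPredict` | Predict missing edges |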

+

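+## Best Practices
+
+1. **Start with small datasets**: test your pipeline on small graphs (for example, Cora or Citeseer) before scaling up
+2. **Use early stopping**: set the `patience` parameter to avoid overfitting
+3. **Tune hyperparameters**: adjust the learning rate, hidden dimensions, and number of epochs to the dataset size
+4. **Monitor GPU memory**: large graphs may require batched training (for example, Cluster-GCN)
+5. **Validate the schema**: make sure the vertex/edge labels match your HugeGraph schema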
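The `patience` argument used in the examples follows the usual early-stopping rule: stop once the monitored metric has not improved for `patience` consecutive epochs, and keep the best epoch. A sketch of that rule over a precomputed list of validation losses; the helper is hypothetical, and HugeGraph-ML's tasks may differ in details such as which metric they monitor.

```python
def early_stop(val_losses, patience):
    """Return (best_epoch, last_epoch_run) under a patience-based stopping rule."""
    best_loss, best_epoch, waited = float("inf"), -1, 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch, waited = loss, epoch, 0  # improvement: reset the counter
        else:
            waited += 1
            if waited >= patience:
                return best_epoch, epoch  # patience exhausted: stop here
    return best_epoch, len(val_losses) - 1  # every epoch was run


val_losses = [1.00, 0.80, 0.70, 0.71, 0.72, 0.73, 0.50]
print(early_stop(val_losses, patience=3))
print(early_stop(val_losses, patience=5))
```

With `patience=3`, training stops at epoch 5 and never reaches the improvement at epoch 6, which is why a patience that is too small can end training prematurely.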

+

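+## Troubleshooting
+
+| Problem | Solution |
+|---------|----------|
+| "Connection refused" when connecting to HugeGraph | Verify that the server is running on port 8080 |
+| CUDA out of memory | Reduce the batch size or use CPU-only mode |
+| Model convergence problems | Try different learning rates (1e-2, 1e-3, 1e-4) |
+| `ImportError` for DGL | Run `uv sync` to reinstall the dependencies |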

+

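+## Contributing
+
+To add a new algorithm:
+
+1. Create a model file at `src/hugegraph_ml/models/your_model.py`
+2. Inherit from the base model class and implement the `forward()` method
+3. Add an example script under `src/hugegraph_ml/examples/`
+4. Update this document with the algorithm details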

+

+## See Also
+
+- [HugeGraph-AI Overview](../_index.md) - the complete AI ecosystem
+- [HugeGraph-LLM](./hugegraph-llm.md) - RAG and knowledge graph construction
+- [GitHub repository](https://github.com/apache/hugegraph-ai/tree/main/hugegraph-ml) - source code and examples

diff --git a/content/cn/docs/quickstart/hugegraph-ai/quick_start.md b/content/cn/docs/quickstart/hugegraph-ai/quick_start.md

index 6d8d22f90..da148f7e7 100644

--- a/content/cn/docs/quickstart/hugegraph-ai/quick_start.md

+++ b/content/cn/docs/quickstart/hugegraph-ai/quick_start.md

@@ -190,3 +190,63 @@ graph TD;

# 5. Graph Tools
Enter a Gremlin query to perform the corresponding operation.

+

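+# 6. Language Switching (v1.5.0+)
+
+HugeGraph-LLM supports bilingual prompts to improve accuracy across languages.
+
+### Switching Between English and Chinese
+
+The system language affects:
+- **System prompts**: the internal prompts used by the LLM
+- **Keyword extraction**: language-specific extraction logic
+- **Answer generation**: response format and style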

+

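+#### Method 1: Environment Variable
+
+Edit your `.env` file:
+
+```bash
+# English prompts (default)
+LANGUAGE=EN
+
+# Or, for Chinese prompts
+LANGUAGE=CN
+```
+
+Restart the service after changing the language setting.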

+

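+#### Method 2: Web UI (Dynamic)
+
+If your deployment provides it, use the settings panel in the Web UI to switch languages without a restart:
+
+1. Go to the **Settings** or **Configuration** tab
+2. Select the **Language**: `EN` or `CN`
+3. Click **Save**; the change takes effect immediately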

+

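+#### Language-Specific Behavior
+
+| Language | Keyword extraction | Answer style | Use case |
+|----------|--------------------|--------------|----------|
+| `EN` | English NLP model | Professional, concise | International users, English documents |
+| `CN` | Chinese NLP model | Natural Chinese phrasing | Chinese users, Chinese documents |
+
+> [!TIP]
+> Set `LANGUAGE` to match the main language of your documents for the best RAG accuracy.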
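The precedence described in this section and in the REST API override that follows (a prompt passed with a request wins over the default for the configured language) can be sketched as a lookup. Everything here is hypothetical: `DEFAULT_PROMPTS` and `effective_prompt` only illustrate the precedence (the real prompts live in `config_prompt.yaml`), and falling back to `EN` for an unknown language is an assumption of this sketch.

```python
DEFAULT_PROMPTS = {
    "EN": {"answer_prompt": "Answer the question using the context below..."},
    "CN": {"answer_prompt": "<Chinese answer prompt>"},
}


def effective_prompt(name, language, request_prompts=None):
    """Pick a prompt: per-request override first, then the LANGUAGE default.

    Assumption for this sketch: unknown LANGUAGE values fall back to EN.
    """
    if request_prompts and request_prompts.get(name):
        return request_prompts[name]
    lang = (language or "EN").strip().upper()
    return DEFAULT_PROMPTS.get(lang, DEFAULT_PROMPTS["EN"])[name]


print(effective_prompt("answer_prompt", "cn"))
print(effective_prompt("answer_prompt", "CN", {"answer_prompt": "Answer briefly."}))
```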

+

+### REST API Language Override
+
+When using the REST API, you can pass custom prompts with each request to override the default language setting:

+

+```bash

+curl -X POST http://localhost:8001/rag \
+  -H "Content-Type: application/json" \
+  -d '{
+    "query": "Tell me about Al Pacino",
+    "graph_only": true,
+    "keywords_extract_prompt": "Extract the key entities from the following text...",
+    "answer_prompt": "Answer the question based on the following context..."
+  }'

+```

+

+For full parameter details, see the [REST API Reference](./rest-api.md).
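The same request can be built from Python with only the standard library. A sketch that assembles the `POST /rag` call from the curl example with `urllib.request`; the field names are copied from that example, `build_rag_request` is a hypothetical helper, and the request is not sent, so the snippet runs without a server.

```python
import json
import urllib.request


def build_rag_request(query, base_url="http://localhost:8001", **prompts):
    """Build (but do not send) a POST /rag request with optional prompt overrides."""
    body = {"query": query, "graph_only": True, **prompts}
    return urllib.request.Request(
        f"{base_url}/rag",
        data=json.dumps(body, ensure_ascii=False).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_rag_request(
    "Tell me about Al Pacino",
    keywords_extract_prompt="Extract the key entities from the following text...",
    answer_prompt="Answer the question based on the following context...",
)
print(req.get_method(), req.full_url)
# To send it to a running service: urllib.request.urlopen(req).read()
```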

diff --git a/content/cn/docs/quickstart/hugegraph-ai/rest-api.md b/content/cn/docs/quickstart/hugegraph-ai/rest-api.md

new file mode 100644

index 000000000..349ff4c06

--- /dev/null

+++ b/content/cn/docs/quickstart/hugegraph-ai/rest-api.md

@@ -0,0 +1,428 @@

+---

+title: "REST API Reference"
+linkTitle: "REST API"
+weight: 5
+---
+
+HugeGraph-LLM provides REST API endpoints for integrating its RAG and Text2Gremlin features into your applications.

+

+## Base URL

+

+```

+http://localhost:8001

+```

+

+To change the host/port, pass them when starting the service:

+```bash

+python -m hugegraph_llm.demo.rag_demo.app --host 127.0.0.1 --port 8001

+```

+

+## Authentication
+
+The API currently supports optional token-based authentication:
+
+```bash
+# Enable authentication in .env

+ENABLE_LOGIN=true

+USER_TOKEN=your-user-token

+ADMIN_TOKEN=your-admin-token

+```

+

+Pass the token in the request header:

+```bash

+Authorization: Bearer

diff --git a/content/cn/docs/quickstart/hugegraph-ai/config-reference.md b/content/cn/docs/quickstart/hugegraph-ai/config-reference.md

new file mode 100644

index 000000000..4172ae12e

--- /dev/null

+++ b/content/cn/docs/quickstart/hugegraph-ai/config-reference.md

@@ -0,0 +1,396 @@

+---

+title: "配置参考"

+linkTitle: "配置参考"

+weight: 4

+---

+

+本文档提供 HugeGraph-LLM 所有配置选项的完整参考。

+

+## 配置文件

+

+- **环境文件**:`.env`(从模板创建或自动生成)

+- **提示词配置**:`src/hugegraph_llm/resources/demo/config_prompt.yaml`

+

+> [!TIP]

+> 运行 `python -m hugegraph_llm.config.generate --update` 可自动生成或更新带有默认值的配置文件。

+

+## 环境变量概览

+

+### 1. 语言和模型类型选择

+

+```bash

+# 提示词语言(影响系统提示词和生成文本)

+LANGUAGE=EN # 选项: EN | CN

+

+# 不同任务的 LLM 类型

+CHAT_LLM_TYPE=openai # 对话/RAG: openai | litellm | ollama/local

+EXTRACT_LLM_TYPE=openai # 实体抽取: openai | litellm | ollama/local

+TEXT2GQL_LLM_TYPE=openai # 文本转 Gremlin: openai | litellm | ollama/local

+

+# 嵌入模型类型

+EMBEDDING_TYPE=openai # 选项: openai | litellm | ollama/local

+

+# Reranker 类型(可选)

+RERANKER_TYPE= # 选项: cohere | siliconflow | (留空表示无)

+```

+

+### 2. OpenAI 配置

+

+每个 LLM 任务(chat、extract、text2gql)都有独立配置:

+

+#### 2.1 Chat LLM(RAG 答案生成)

+

+```bash

+OPENAI_CHAT_API_BASE=https://api.openai.com/v1

+OPENAI_CHAT_API_KEY=sk-your-api-key-here

+OPENAI_CHAT_LANGUAGE_MODEL=gpt-4o-mini

+OPENAI_CHAT_TOKENS=8192 # 对话响应的最大 tokens

+```

+

+#### 2.2 Extract LLM(实体和关系抽取)

+

+```bash

+OPENAI_EXTRACT_API_BASE=https://api.openai.com/v1

+OPENAI_EXTRACT_API_KEY=sk-your-api-key-here

+OPENAI_EXTRACT_LANGUAGE_MODEL=gpt-4o-mini

+OPENAI_EXTRACT_TOKENS=1024 # 抽取任务的最大 tokens

+```

+

+#### 2.3 Text2GQL LLM(自然语言转 Gremlin)

+

+```bash

+OPENAI_TEXT2GQL_API_BASE=https://api.openai.com/v1

+OPENAI_TEXT2GQL_API_KEY=sk-your-api-key-here

+OPENAI_TEXT2GQL_LANGUAGE_MODEL=gpt-4o-mini

+OPENAI_TEXT2GQL_TOKENS=4096 # 查询生成的最大 tokens

+```

+

+#### 2.4 嵌入模型

+

+```bash

+OPENAI_EMBEDDING_API_BASE=https://api.openai.com/v1

+OPENAI_EMBEDDING_API_KEY=sk-your-api-key-here

+OPENAI_EMBEDDING_MODEL=text-embedding-3-small

+```

+

+> [!NOTE]

+> 您可以为每个任务使用不同的 API 密钥/端点,以优化成本或使用专用模型。

+

+### 3. LiteLLM 配置(多供应商支持)

+

+LiteLLM 支持统一访问 100 多个 LLM 供应商(OpenAI、Anthropic、Google、Azure 等)。

+

+#### 3.1 Chat LLM

+

+```bash

+LITELLM_CHAT_API_BASE=http://localhost:4000 # LiteLLM 代理 URL

+LITELLM_CHAT_API_KEY=sk-litellm-key # LiteLLM API 密钥

+LITELLM_CHAT_LANGUAGE_MODEL=anthropic/claude-3-5-sonnet-20241022

+LITELLM_CHAT_TOKENS=8192

+```

+

+#### 3.2 Extract LLM

+

+```bash

+LITELLM_EXTRACT_API_BASE=http://localhost:4000

+LITELLM_EXTRACT_API_KEY=sk-litellm-key

+LITELLM_EXTRACT_LANGUAGE_MODEL=openai/gpt-4o-mini

+LITELLM_EXTRACT_TOKENS=256

+```

+

+#### 3.3 Text2GQL LLM

+

+```bash

+LITELLM_TEXT2GQL_API_BASE=http://localhost:4000

+LITELLM_TEXT2GQL_API_KEY=sk-litellm-key

+LITELLM_TEXT2GQL_LANGUAGE_MODEL=openai/gpt-4o-mini

+LITELLM_TEXT2GQL_TOKENS=4096

+```

+

+#### 3.4 嵌入模型

+

+```bash

+LITELLM_EMBEDDING_API_BASE=http://localhost:4000

+LITELLM_EMBEDDING_API_KEY=sk-litellm-key

+LITELLM_EMBEDDING_MODEL=openai/text-embedding-3-small

+```

+

+**模型格式**: `供应商/模型名称`

+

+示例:

+- `openai/gpt-4o-mini`

+- `anthropic/claude-3-5-sonnet-20241022`

+- `google/gemini-2.0-flash-exp`

+- `azure/gpt-4`

+

+完整列表请参阅 [LiteLLM Providers](https://docs.litellm.ai/docs/providers)。
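LiteLLM 会自行解析这种格式。下面的 `split_model` 是一个假设性的辅助函数,可在写入 `.env` 前粗略检查模型名是否带有供应商前缀。它只在第一个 `/` 处拆分,所以模型名本身包含 `/` 时也能保持完整:

```python
def split_model(name: str) -> tuple:
    """把 "供应商/模型名称" 拆成 (供应商, 模型名称)。"""
    provider, sep, model = name.partition("/")  # 只在第一个 "/" 处拆分
    if not sep or not provider or not model:
        raise ValueError(f"期望 '供应商/模型名称' 格式,实际为: {name!r}")
    return provider, model


for name in ["openai/gpt-4o-mini", "anthropic/claude-3-5-sonnet-20241022", "azure/gpt-4"]:
    print(split_model(name))
```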

+

+### 4. Ollama 配置(本地部署)

+

+使用 Ollama 运行本地 LLM,确保隐私和成本控制。

+

+#### 4.1 Chat LLM

+

+```bash

+OLLAMA_CHAT_HOST=127.0.0.1

+OLLAMA_CHAT_PORT=11434

+OLLAMA_CHAT_LANGUAGE_MODEL=llama3.1:8b

+```

+

+#### 4.2 Extract LLM

+

+```bash

+OLLAMA_EXTRACT_HOST=127.0.0.1

+OLLAMA_EXTRACT_PORT=11434

+OLLAMA_EXTRACT_LANGUAGE_MODEL=llama3.1:8b

+```

+

+#### 4.3 Text2GQL LLM

+

+```bash

+OLLAMA_TEXT2GQL_HOST=127.0.0.1

+OLLAMA_TEXT2GQL_PORT=11434

+OLLAMA_TEXT2GQL_LANGUAGE_MODEL=qwen2.5-coder:7b

+```

+

+#### 4.4 嵌入模型

+

+```bash

+OLLAMA_EMBEDDING_HOST=127.0.0.1

+OLLAMA_EMBEDDING_PORT=11434

+OLLAMA_EMBEDDING_MODEL=nomic-embed-text

+```

+

+> [!TIP]

+> 下载模型:`ollama pull llama3.1:8b` 或 `ollama pull qwen2.5-coder:7b`
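如果不确定 `.env` 中引用的模型是否已经下载,可以查询 Ollama 的 `GET /api/tags` 接口,它返回本地模型列表。以下是一个假设性的检查脚本草图,函数名均为示意。注意:未写标签的模型(如 `nomic-embed-text`)在 Ollama 中会以 `:latest` 标签保存:

```python
import json
import urllib.request


def normalize_tag(name: str) -> str:
    """Ollama 会把未写标签的模型保存为 "<名称>:latest"。"""
    return name if ":" in name else f"{name}:latest"


def parse_model_names(payload: dict) -> set:
    """从 /api/tags 的响应中取出本地模型名。"""
    return {model["name"] for model in payload.get("models", [])}


def fetch_local_models(host: str = "127.0.0.1", port: int = 11434) -> set:
    """请求正在运行的 Ollama 服务,返回已下载的模型名集合。"""
    url = f"http://{host}:{port}/api/tags"
    with urllib.request.urlopen(url, timeout=5) as resp:
        return parse_model_names(json.load(resp))


def missing_models(required: list, available: set) -> list:
    """返回 .env 中引用但尚未执行 `ollama pull` 的模型。"""
    return [name for name in required if normalize_tag(name) not in available]


# 离线演示:sample 是截取后的示例响应,仅保留 name 字段
sample = {"models": [{"name": "llama3.1:8b"}, {"name": "nomic-embed-text:latest"}]}
required = ["llama3.1:8b", "qwen2.5-coder:7b", "nomic-embed-text"]
print(missing_models(required, parse_model_names(sample)))  # ['qwen2.5-coder:7b']
```

连接本地服务时,可改为调用 `missing_models(required, fetch_local_models())`。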

+

+### 5. Reranker 配置

+

+Reranker 通过根据相关性重新排序检索结果来提高 RAG 准确性。

+

+#### 5.1 Cohere Reranker

+

+```bash

+RERANKER_TYPE=cohere

+COHERE_BASE_URL=https://api.cohere.com/v1/rerank

+RERANKER_API_KEY=your-cohere-api-key

+RERANKER_MODEL=rerank-english-v3.0

+```

+

+可用模型:

+- `rerank-english-v3.0`(英文)

+- `rerank-multilingual-v3.0`(100+ 种语言)
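以 Cohere 为例,rerank 接口接收查询和候选文档,并为每个文档返回 `index` 与 `relevance_score`。下面的草图做两件事:

- 按 Cohere v1 `/rerank` 接口公开的请求格式构造请求,但不会真正发送。
- 用一个虚构的响应演示如何按得分重新排序。

hugegraph-llm 内部的调用方式可能不同。`build_rerank_request`、`apply_rerank` 均为假设性名称:

```python
import json
import urllib.request


def build_rerank_request(base_url, api_key, model, query, documents, top_n):
    """构造 Cohere rerank 请求(仅构造,不发送)。"""
    body = {"model": model, "query": query, "documents": documents, "top_n": top_n}
    return urllib.request.Request(
        base_url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def apply_rerank(documents, response):
    """按响应中的 relevance_score 从高到低返回文档。"""
    ranked = sorted(response["results"], key=lambda r: r["relevance_score"], reverse=True)
    return [documents[r["index"]] for r in ranked]


docs = ["HugeGraph 是图数据库", "今天天气不错", "Gremlin 是图查询语言"]
req = build_rerank_request("https://api.cohere.com/v1/rerank", "your-cohere-api-key",
                           "rerank-multilingual-v3.0", "什么是 HugeGraph?", docs, top_n=2)
# 虚构的响应,仅用于演示 apply_rerank 的处理方式
fake_response = {"results": [{"index": 2, "relevance_score": 0.41},
                             {"index": 0, "relevance_score": 0.97}]}
print(apply_rerank(docs, fake_response))  # ['HugeGraph 是图数据库', 'Gremlin 是图查询语言']
```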

+

+#### 5.2 SiliconFlow Reranker

+

+```bash

+RERANKER_TYPE=siliconflow

+RERANKER_API_KEY=your-siliconflow-api-key

+RERANKER_MODEL=BAAI/bge-reranker-v2-m3

+```

+

+### 6. HugeGraph 连接

+

+配置与 HugeGraph 服务器实例的连接。

+

+```bash

+# 服务器连接

+GRAPH_IP=127.0.0.1

+GRAPH_PORT=8080

+GRAPH_NAME=hugegraph # 图实例名称

+GRAPH_USER=admin # 用户名

+GRAPH_PWD=admin-password # 密码

+# 图空间(可选,用于多租户)

+GRAPH_SPACE=

+```
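启用认证后,HugeGraph Server 的 REST 接口使用 HTTP Basic 认证。下面的草图演示上述变量如何组合成请求地址和认证头。路径按 `/graphs/{GRAPH_NAME}` 假设,未处理 `GRAPH_SPACE`,函数名均为示意:

```python
import base64


def graph_endpoint(ip: str, port: int, name: str) -> str:
    """拼出图实例的 REST 地址(假设路径为 /graphs/{name},未考虑 GRAPH_SPACE)。"""
    return f"http://{ip}:{port}/graphs/{name}"


def basic_auth_header(user: str, pwd: str) -> dict:
    """HTTP Basic 认证头:Base64("用户名:密码")。"""
    token = base64.b64encode(f"{user}:{pwd}".encode("utf-8")).decode("ascii")
    return {"Authorization": f"Basic {token}"}


print(graph_endpoint("127.0.0.1", 8080, "hugegraph"))  # http://127.0.0.1:8080/graphs/hugegraph
print(basic_auth_header("admin", "admin"))  # {'Authorization': 'Basic YWRtaW46YWRtaW4='}
```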

+

+### 7. 查询参数

+

+控制图遍历行为和结果限制。

+

+```bash

+# 图遍历限制

+MAX_GRAPH_PATH=10 # 图查询的最大路径深度

+MAX_GRAPH_ITEMS=30 # 从图中检索的最大项数

+EDGE_LIMIT_PRE_LABEL=8 # 每个标签类型的最大边数

+

+# 属性过滤

+LIMIT_PROPERTY=False # 限制结果中的属性(True/False)

+```
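按上面的注释,`EDGE_LIMIT_PRE_LABEL` 限制每种边标签返回的边数。下面用一个假设性的 `limit_edges_per_label` 函数示意这一限制的效果。hugegraph-llm 实际可能在 Gremlin 查询中完成这一步:

```python
from collections import defaultdict


def limit_edges_per_label(edges, limit):
    """每种边标签最多保留 limit 条边(按出现顺序)。"""
    kept, counts = [], defaultdict(int)
    for edge in edges:
        label = edge["label"]
        if counts[label] < limit:
            counts[label] += 1
            kept.append(edge)
    return kept


# 示例数据为假设:10 条 acted_in 边 + 1 条 directed 边
edges = [{"label": "acted_in", "id": i} for i in range(10)] + [{"label": "directed", "id": 99}]
print(len(limit_edges_per_label(edges, limit=8)))  # 9
```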

+

+### 8. 向量搜索配置

+

+配置向量相似性搜索参数。

+

+```bash

+# 向量搜索阈值

+VECTOR_DIS_THRESHOLD=0.9 # 向量距离阈值:只保留距离小于该值的结果(值越小越严格)

+TOPK_PER_KEYWORD=1 # 每个提取关键词的 Top-K 结果

+```
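变量名中的 `DIS` 指 distance。下面的草图做了两个假设:

- 该阈值作用于向量距离:距离越小越相似,只保留距离小于阈值的结果。
- 过滤后再按 `TOPK_PER_KEYWORD` 取每个关键词最接近的结果。

`filter_hits` 及示例数据均为假设:

```python
def filter_hits(hits, dis_threshold=0.9, topk=1):
    """hits: 某个关键词的 (距离, 顶点ID) 列表;距离越小越相似。"""
    close = [h for h in hits if h[0] < dis_threshold]  # 仅保留距离小于阈值的结果
    return [vid for _, vid in sorted(close)[:topk]]     # 按距离升序取前 topk 个


hits = [(0.35, "1:Al Pacino"), (0.95, "1:Robert De Niro"), (0.62, "1:Marlon Brando")]
print(filter_hits(hits, dis_threshold=0.9, topk=1))  # ['1:Al Pacino']
```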

+

+### 9. Rerank 配置

+

+```bash

+# Rerank 结果限制

+TOPK_RETURN_RESULTS=20 # 重排序后的 top 结果数

+```

+

+## 配置优先级

+

+系统按以下顺序加载配置(后面的来源覆盖前面的):

+

+1. **默认值**(在 `*_config.py` 文件中)

+2. **环境变量**(来自 `.env` 文件)

+3. **运行时更新**(通过 Web UI 或 API 调用)
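这种"后者覆盖前者"的分层查找,可以用 Python 标准库的 `collections.ChainMap` 来示意。以下仅为说明优先级的草图,示例值均为假设,并非 hugegraph-llm 的加载代码:

```python
from collections import ChainMap

# 示例值均为假设
defaults = {"LANGUAGE": "EN", "GRAPH_PORT": "8080", "MAX_GRAPH_ITEMS": "30"}  # 1. 默认值
env_file = {"LANGUAGE": "CN", "MAX_GRAPH_ITEMS": "50"}                      # 2. .env 文件
runtime = {"MAX_GRAPH_ITEMS": "40"}                                         # 3. 运行时更新

# ChainMap 从左到右查找,所以把优先级最高的来源放在最前面
effective = ChainMap(runtime, env_file, defaults)
print(dict(effective))  # {'LANGUAGE': 'CN', 'GRAPH_PORT': '8080', 'MAX_GRAPH_ITEMS': '40'}
```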

+

+## 配置示例

+

+### 最小配置(OpenAI)

+

+```bash

+# 语言

+LANGUAGE=EN

+

+# LLM 类型

+CHAT_LLM_TYPE=openai

+EXTRACT_LLM_TYPE=openai

+TEXT2GQL_LLM_TYPE=openai

+EMBEDDING_TYPE=openai

+

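+# OpenAI 凭据(各任务可使用同一个密钥,但需按任务分别填写)

+OPENAI_CHAT_API_BASE=https://api.openai.com/v1

+OPENAI_CHAT_API_KEY=sk-your-api-key-here

+OPENAI_CHAT_LANGUAGE_MODEL=gpt-4o-mini

+OPENAI_EXTRACT_API_BASE=https://api.openai.com/v1

+OPENAI_EXTRACT_API_KEY=sk-your-api-key-here

+OPENAI_EXTRACT_LANGUAGE_MODEL=gpt-4o-mini

+OPENAI_TEXT2GQL_API_BASE=https://api.openai.com/v1

+OPENAI_TEXT2GQL_API_KEY=sk-your-api-key-here

+OPENAI_TEXT2GQL_LANGUAGE_MODEL=gpt-4o-mini

+OPENAI_EMBEDDING_API_BASE=https://api.openai.com/v1

+OPENAI_EMBEDDING_API_KEY=sk-your-api-key-here

+OPENAI_EMBEDDING_MODEL=text-embedding-3-small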

+

+# HugeGraph 连接

+GRAPH_IP=127.0.0.1

+GRAPH_PORT=8080

+GRAPH_NAME=hugegraph

+GRAPH_USER=admin

+GRAPH_PWD=admin

+```

+

+### 生产环境配置(LiteLLM + Reranker)

+

+```bash

+# 提示词语言(EN 或 CN)

+LANGUAGE=EN

+

+# 灵活使用 LiteLLM

+CHAT_LLM_TYPE=litellm

+EXTRACT_LLM_TYPE=litellm

+TEXT2GQL_LLM_TYPE=litellm

+EMBEDDING_TYPE=litellm

+

+# LiteLLM 代理

+LITELLM_CHAT_API_BASE=http://localhost:4000

+LITELLM_CHAT_API_KEY=sk-litellm-master-key

+LITELLM_CHAT_LANGUAGE_MODEL=anthropic/claude-3-5-sonnet-20241022

+LITELLM_CHAT_TOKENS=8192

+

+LITELLM_EXTRACT_API_BASE=http://localhost:4000

+LITELLM_EXTRACT_API_KEY=sk-litellm-master-key

+LITELLM_EXTRACT_LANGUAGE_MODEL=openai/gpt-4o-mini

+LITELLM_EXTRACT_TOKENS=256

+

+LITELLM_TEXT2GQL_API_BASE=http://localhost:4000

+LITELLM_TEXT2GQL_API_KEY=sk-litellm-master-key

+LITELLM_TEXT2GQL_LANGUAGE_MODEL=openai/gpt-4o-mini

+LITELLM_TEXT2GQL_TOKENS=4096

+

+LITELLM_EMBEDDING_API_BASE=http://localhost:4000

+LITELLM_EMBEDDING_API_KEY=sk-litellm-master-key

+LITELLM_EMBEDDING_MODEL=openai/text-embedding-3-small

+

+# Cohere Reranker 提高准确性

+RERANKER_TYPE=cohere

+COHERE_BASE_URL=https://api.cohere.com/v1/rerank

+RERANKER_API_KEY=your-cohere-key

+RERANKER_MODEL=rerank-multilingual-v3.0

+

+# 带认证的 HugeGraph

+GRAPH_IP=prod-hugegraph.example.com

+GRAPH_PORT=8080

+GRAPH_NAME=production_graph

+GRAPH_USER=rag_user

+GRAPH_PWD=secure-password

+GRAPH_SPACE=prod_space

+

+# 优化的查询参数

+MAX_GRAPH_PATH=15

+MAX_GRAPH_ITEMS=50

+VECTOR_DIS_THRESHOLD=0.85

+TOPK_RETURN_RESULTS=30

+```

+

+### 本地/离线配置(Ollama)

+

+```bash

+# 语言

+LANGUAGE=EN

+

+# 全部通过 Ollama 使用本地模型

+CHAT_LLM_TYPE=ollama/local

+EXTRACT_LLM_TYPE=ollama/local

+TEXT2GQL_LLM_TYPE=ollama/local

+EMBEDDING_TYPE=ollama/local

+

+# Ollama 端点

+OLLAMA_CHAT_HOST=127.0.0.1

+OLLAMA_CHAT_PORT=11434

+OLLAMA_CHAT_LANGUAGE_MODEL=llama3.1:8b

+

+OLLAMA_EXTRACT_HOST=127.0.0.1

+OLLAMA_EXTRACT_PORT=11434

+OLLAMA_EXTRACT_LANGUAGE_MODEL=llama3.1:8b

+

+OLLAMA_TEXT2GQL_HOST=127.0.0.1

+OLLAMA_TEXT2GQL_PORT=11434

+OLLAMA_TEXT2GQL_LANGUAGE_MODEL=qwen2.5-coder:7b

+

+OLLAMA_EMBEDDING_HOST=127.0.0.1

+OLLAMA_EMBEDDING_PORT=11434

+OLLAMA_EMBEDDING_MODEL=nomic-embed-text

+

+# 离线环境不使用 reranker

+RERANKER_TYPE=

+

+# 本地 HugeGraph

+GRAPH_IP=127.0.0.1

+GRAPH_PORT=8080

+GRAPH_NAME=hugegraph

+GRAPH_USER=admin

+GRAPH_PWD=admin

+```

+

+## 配置验证

+

+修改 `.env` 后,验证配置:

+

+1. **通过 Web UI**:访问 `http://localhost:8001` 并检查设置面板

+2. **通过 Python**:

+```python

+from hugegraph_llm.config import huge_settings, llm_settings

+print(llm_settings.chat_llm_type)  # 对话任务当前使用的 LLM 类型

+print(huge_settings.graph_name)  # 当前连接的图实例名称

+```

+3. **通过 REST API**:

+```bash

+curl http://localhost:8001/config

+```
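也可以在启动服务前离线检查 `.env`,提前发现故障排除表中 "API key not found" 一类问题。下面是一个与 hugegraph-llm 无关的独立草图:

- `parse_env` 只是极简解析器。
- `REQUIRED` 中各类型的必填项是根据本文变量名做出的假设,可按需增删。

```python
def parse_env(text: str) -> dict:
    """极简 .env 解析:跳过空行和 # 注释行,并去掉 " # 注释" 形式的行尾注释。"""
    env = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        env[key.strip()] = value.split(" #", 1)[0].strip()
    return env


# 假设:每种 LLM 类型至少需要这些变量({task} 会替换为 CHAT / EXTRACT / TEXT2GQL)
REQUIRED = {
    "openai": ["OPENAI_{task}_API_KEY", "OPENAI_{task}_LANGUAGE_MODEL"],
    "litellm": ["LITELLM_{task}_API_BASE", "LITELLM_{task}_LANGUAGE_MODEL"],
    "ollama/local": ["OLLAMA_{task}_HOST", "OLLAMA_{task}_LANGUAGE_MODEL"],
}


def missing_keys(env: dict) -> list:
    """返回当前 *_LLM_TYPE 组合下缺失或为空的变量名。"""
    missing = []
    for task in ("CHAT", "EXTRACT", "TEXT2GQL"):
        llm_type = env.get(f"{task}_LLM_TYPE", "openai")  # 假设:未设置时按 openai 处理
        for template in REQUIRED[llm_type]:
            key = template.format(task=task)
            if not env.get(key):
                missing.append(key)
    return missing


sample = """
CHAT_LLM_TYPE=openai
EXTRACT_LLM_TYPE=ollama/local
TEXT2GQL_LLM_TYPE=openai
OPENAI_CHAT_API_KEY=sk-your-api-key-here
OPENAI_CHAT_LANGUAGE_MODEL=gpt-4o-mini # 对话模型
OLLAMA_EXTRACT_HOST=127.0.0.1
OLLAMA_EXTRACT_LANGUAGE_MODEL=llama3.1:8b
OPENAI_TEXT2GQL_LANGUAGE_MODEL=gpt-4o-mini
"""
print(missing_keys(parse_env(sample)))  # ['OPENAI_TEXT2GQL_API_KEY']
```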

+

+## 故障排除

+

+| 问题 | 解决方案 |

+|------|---------|

+| "API key not found" | 检查 `.env` 中的 `*_API_KEY` 是否正确设置 |

+| "Connection refused" | 验证 `GRAPH_IP` 和 `GRAPH_PORT` 是否正确 |

+| "Model not found" | 对于 Ollama:运行 `ollama pull <模型名称>` |

+| "Rate limit exceeded" | 减少 `MAX_GRAPH_ITEMS` 或使用不同的 API 密钥 |

+| "Embedding dimension mismatch" | 删除现有向量并使用正确模型重建 |

+

+## 另见

+

+- [HugeGraph-LLM 概述](./hugegraph-llm.md)

+- [REST API 参考](./rest-api.md)

+- [快速入门指南](./quick_start.md)

diff --git a/content/cn/docs/quickstart/hugegraph-ai/hugegraph-llm.md b/content/cn/docs/quickstart/hugegraph-ai/hugegraph-llm.md

index b353a8fba..d376b3de1 100644

--- a/content/cn/docs/quickstart/hugegraph-ai/hugegraph-llm.md

+++ b/content/cn/docs/quickstart/hugegraph-ai/hugegraph-llm.md

@@ -4,11 +4,11 @@ linkTitle: "HugeGraph-LLM"

weight: 1

---

-> 本文为中文翻译版本,内容基于英文版进行,我们欢迎您随时提出修改建议。我们推荐您阅读 [AI 仓库 README](https://github.com/apache/incubator-hugegraph-ai/tree/main/hugegraph-llm#readme) 以获取最新信息,官网会定期同步更新。

+> 本文为中文翻译版本,内容基于英文版进行,我们欢迎您随时提出修改建议。我们推荐您阅读 [AI 仓库 README](https://github.com/apache/hugegraph-ai/tree/main/hugegraph-llm#readme) 以获取最新信息,官网会定期同步更新。

> **连接图数据库与大语言模型的桥梁**

-> AI 总结项目文档:[](https://deepwiki.com/apache/incubator-hugegraph-ai)

+> AI 总结项目文档:[](https://deepwiki.com/apache/hugegraph-ai)

## 🎯 概述

@@ -19,7 +19,7 @@ HugeGraph-LLM 是一个功能强大的工具包,它融合了图数据库和大

- 🗣️ **自然语言查询**:通过自然语言(Gremlin/Cypher)操作图数据库。

- 🔍 **图增强 RAG**:借助知识图谱提升问答准确性(GraphRAG 和 Graph Agent)。

-更多源码文档,请访问我们的 [DeepWiki](https://deepwiki.com/apache/incubator-hugegraph-ai) 页面(推荐)。

+更多源码文档,请访问我们的 [DeepWiki](https://deepwiki.com/apache/hugegraph-ai) 页面(推荐)。

## 📋 环境要求

@@ -90,8 +90,8 @@ docker run -itd --name=server -p 8080:8080 hugegraph/hugegraph

curl -LsSf https://astral.sh/uv/install.sh | sh

# 3. 克隆并设置项目

-git clone https://github.com/apache/incubator-hugegraph-ai.git

-cd incubator-hugegraph-ai/hugegraph-llm

+git clone https://github.com/apache/hugegraph-ai.git

+cd hugegraph-ai/hugegraph-llm

# 4. 创建虚拟环境并安装依赖

uv venv && source .venv/bin/activate

@@ -116,7 +116,7 @@ python -m hugegraph_llm.config.generate --update

```

> [!TIP]

-> 查看我们的[快速入门指南](https://github.com/apache/incubator-hugegraph-ai/blob/main/hugegraph-llm/quick_start.md)获取详细用法示例和查询逻辑解释。

+> 查看我们的[快速入门指南](https://github.com/apache/hugegraph-ai/blob/main/hugegraph-llm/quick_start.md)获取详细用法示例和查询逻辑解释。

## 💡 用法示例

@@ -131,7 +131,7 @@ python -m hugegraph_llm.config.generate --update

- **文件**:上传 TXT 或 DOCX 文件(支持多选)

**Schema 配置:**

-- **自定义 Schema**:遵循我们[模板](https://github.com/apache/incubator-hugegraph-ai/blob/aff3bbe25fa91c3414947a196131be812c20ef11/hugegraph-llm/src/hugegraph_llm/config/config_data.py#L125)的 JSON 格式

+- **自定义 Schema**:遵循我们[模板](https://github.com/apache/hugegraph-ai/blob/aff3bbe25fa91c3414947a196131be812c20ef11/hugegraph-llm/src/hugegraph_llm/config/config_data.py#L125)的 JSON 格式

- **HugeGraph Schema**:使用现有图实例的 Schema(例如,“hugegraph”)

@@ -214,7 +214,7 @@ graph TD

## 🔧 配置

-运行演示后,将自动生成配置文件:

+运行演示后,将自动生成配置文件:

- **环境**:`hugegraph-llm/.env`

- **提示**:`hugegraph-llm/src/hugegraph_llm/resources/demo/config_prompt.yaml`

@@ -222,7 +222,80 @@ graph TD

> [!NOTE]

> 使用 Web 界面时,配置更改会自动保存。对于手动更改,刷新页面即可加载更新。

-**LLM 提供商支持**:本项目使用 [LiteLLM](https://docs.litellm.ai/docs/providers) 实现多提供商 LLM 支持。

+### LLM 提供商配置

+

+本项目使用 [LiteLLM](https://docs.litellm.ai/docs/providers) 实现多提供商 LLM 支持,可统一访问 OpenAI、Anthropic、Google、Cohere 以及 100 多个其他提供商。

+

+#### 方案一:直接 LLM 连接(OpenAI、Ollama)

+

+```bash

+# .env 配置

+chat_llm_type=openai # 或 ollama/local

+openai_chat_api_key=sk-xxx

+openai_chat_api_base=https://api.openai.com/v1

+openai_chat_language_model=gpt-4o-mini

+openai_chat_tokens=4096

+```

+

+#### 方案二:LiteLLM 多提供商支持

+

+LiteLLM 作为多个 LLM 提供商的统一代理:

+

+```bash

+# .env 配置

+chat_llm_type=litellm

+extract_llm_type=litellm

+text2gql_llm_type=litellm

+

+# LiteLLM 设置(以 chat 任务为例;extract/text2gql 使用对应的 litellm_extract_* / litellm_text2gql_* 项)

+litellm_chat_api_base=http://localhost:4000 # LiteLLM 代理服务器

+litellm_chat_api_key=sk-1234 # LiteLLM API 密钥

+

+# 模型选择(提供商/模型格式)

+litellm_chat_language_model=anthropic/claude-3-5-sonnet-20241022

+litellm_chat_tokens=4096

+```

+

+**支持的提供商**:OpenAI、Anthropic、Google(Gemini)、Azure、Cohere、Bedrock、Vertex AI、Hugging Face 等。

+

+完整提供商列表和配置详情,请访问 [LiteLLM Providers](https://docs.litellm.ai/docs/providers)。

+

+### Reranker 配置

+

+Reranker 通过重新排序检索结果来提高 RAG 准确性。支持的提供商:

+

+```bash

+# Cohere Reranker

+reranker_type=cohere

+cohere_base_url=https://api.cohere.com/v1/rerank

+reranker_api_key=your-cohere-key

+reranker_model=rerank-english-v3.0

+

+# SiliconFlow Reranker(与 Cohere 二选一)

+reranker_type=siliconflow

+reranker_api_key=your-siliconflow-key

+reranker_model=BAAI/bge-reranker-v2-m3

+```

+

+### Text2Gremlin 配置

+

+将自然语言转换为 Gremlin 查询:

+

+```python

+from hugegraph_llm.operators.graph_rag_task import Text2GremlinPipeline

+

+# 初始化工作流

+text2gremlin = Text2GremlinPipeline()

+

+# 生成 Gremlin 查询

+result = (

+ text2gremlin

+ .query_to_gremlin(query="查找所有由 Francis Ford Coppola 执导的电影")

+ .execute_gremlin_query()

+ .run()

+)

+```

+

+**REST API 端点**:有关 HTTP 端点详情,请参阅 [REST API 文档](./rest-api.md)。

## 📚 其他资源

diff --git a/content/cn/docs/quickstart/hugegraph-ai/hugegraph-ml.md b/content/cn/docs/quickstart/hugegraph-ai/hugegraph-ml.md

new file mode 100644

index 000000000..baf0481f0

--- /dev/null

+++ b/content/cn/docs/quickstart/hugegraph-ai/hugegraph-ml.md

@@ -0,0 +1,289 @@

+---

+title: "HugeGraph-ML"

+linkTitle: "HugeGraph-ML"

+weight: 2

+---

+

+HugeGraph-ML 将 HugeGraph 与流行的图学习库集成,支持直接在图数据上进行端到端的机器学习工作流。

+

+## 概述

+

+`hugegraph-ml` 提供了统一接口,用于将图神经网络和机器学习算法应用于存储在 HugeGraph 中的数据。它通过无缝转换 HugeGraph 数据到主流 ML 框架兼容格式,消除了复杂的数据导出/导入流程。

+

+### 核心功能

+

+- **直接 HugeGraph 集成**:无需手动导出即可直接从 HugeGraph 查询图数据

+- **22 种算法实现**:覆盖节点分类、图分类、图嵌入、链接预测、欺诈检测和后处理

+- **DGL 后端**:利用深度图库(DGL)进行高效训练

+- **端到端工作流**:从数据加载到模型训练和评估

+- **模块化任务**:可复用的常见 ML 场景任务抽象

+

+## 环境要求

+

+- **Python**:3.9+(独立模块)

+- **HugeGraph Server**:1.0+(推荐:1.5+)

+- **UV 包管理器**:0.7+(用于依赖管理)

+

+## 安装

+

+### 1. 启动 HugeGraph Server

+

+```bash

+# 方案一:Docker(推荐)

+docker run -itd --name=hugegraph -p 8080:8080 hugegraph/hugegraph

+

+# 方案二:二进制包

+# 参见 https://hugegraph.apache.org/docs/download/download/

+```

+

+### 2. 克隆并设置

+

+```bash

+git clone https://github.com/apache/hugegraph-ai.git

+cd hugegraph-ai/hugegraph-ml

+```

+

+### 3. 安装依赖

+

+```bash

+# uv sync 自动创建 .venv 并安装所有依赖

+uv sync

+

+# 激活虚拟环境

+source .venv/bin/activate

+```

+

+### 4. 导航到源代码目录

+

+```bash

+cd ./src

+```

+

+> [!NOTE]

+> 所有示例均假定您在已激活的虚拟环境中。

+

+## 已实现算法

+

+HugeGraph-ML 目前实现了跨多个类别的 **22 种图机器学习算法**:

+

+### 节点分类(11 种算法)

+

+基于网络结构和特征预测图节点的标签。

+

+| 算法 | 论文 | 描述 |

+|-----|------|------|

+| **GCN** | [Kipf & Welling, 2017](https://arxiv.org/abs/1609.02907) | 图卷积网络 |

+| **GAT** | [Veličković et al., 2018](https://arxiv.org/abs/1710.10903) | 图注意力网络 |

+| **GraphSAGE** | [Hamilton et al., 2017](https://arxiv.org/abs/1706.02216) | 归纳式表示学习 |

+| **APPNP** | [Klicpera et al., 2019](https://arxiv.org/abs/1810.05997) | 个性化 PageRank 传播 |

+| **AGNN** | [Thekumparampil et al., 2018](https://arxiv.org/abs/1803.03735) | 基于注意力的 GNN |

+| **ARMA** | [Bianchi et al., 2019](https://arxiv.org/abs/1901.01343) | 自回归移动平均滤波器 |

+| **DAGNN** | [Liu et al., 2020](https://arxiv.org/abs/2007.09296) | 深度自适应图神经网络 |

+| **DeeperGCN** | [Li et al., 2020](https://arxiv.org/abs/2006.07739) | 非常深的 GCN 架构 |

+| **GRAND** | [Feng et al., 2020](https://arxiv.org/abs/2005.11079) | 图随机神经网络 |

+| **JKNet** | [Xu et al., 2018](https://arxiv.org/abs/1806.03536) | 跳跃知识网络 |

+| **Cluster-GCN** | [Chiang et al., 2019](https://arxiv.org/abs/1905.07953) | 通过聚类实现可扩展 GCN 训练 |

+

+### 图分类(2 种算法)

+

+基于结构和节点特征对整个图进行分类。

+

+| 算法 | 论文 | 描述 |

+|-----|------|------|

+| **DiffPool** | [Ying et al., 2018](https://arxiv.org/abs/1806.08804) | 可微分图池化 |

+| **GIN** | [Xu et al., 2019](https://arxiv.org/abs/1810.00826) | 图同构网络 |

+

+### 图嵌入(3 种算法)

+

+学习用于下游任务的无监督节点表示。

+

+| 算法 | 论文 | 描述 |

+|-----|------|------|

+| **DGI** | [Veličković et al., 2019](https://arxiv.org/abs/1809.10341) | 深度图信息最大化(对比学习) |

+| **BGRL** | [Thakoor et al., 2021](https://arxiv.org/abs/2102.06514) | 自举图表示学习 |

+| **GRACE** | [Zhu et al., 2020](https://arxiv.org/abs/2006.04131) | 图对比学习 |

+

+### 链接预测(3 种算法)

+

+预测图中缺失或未来的连接。

+

+| 算法 | 论文 | 描述 |

+|-----|------|------|

+| **SEAL** | [Zhang & Chen, 2018](https://arxiv.org/abs/1802.09691) | 子图提取和标注 |

+| **P-GNN** | [You et al., 2019](http://proceedings.mlr.press/v97/you19b/you19b.pdf) | 位置感知 GNN |

+| **GATNE** | [Cen et al., 2019](https://arxiv.org/abs/1905.01669) | 属性多元异构网络嵌入 |

+

+### 欺诈检测(2 种算法)

+

+检测图中的异常节点(例如欺诈账户)。

+

+| 算法 | 论文 | 描述 |

+|-----|------|------|

+| **CARE-GNN** | [Dou et al., 2020](https://arxiv.org/abs/2008.08692) | 抗伪装 GNN |

+| **BGNN** | [Ivanov & Prokhorenkova, 2021](https://arxiv.org/abs/2101.08543) | 梯度提升与图神经网络结合(Boost then Convolve) |

+

+### 后处理(1 种算法)

+

+通过标签传播改进预测。

+

+| 算法 | 论文 | 描述 |

+|-----|------|------|

+| **C&S** | [Huang et al., 2020](https://arxiv.org/abs/2010.13993) | 校正与平滑(预测优化) |

+

+## 使用示例

+

+### 示例 1:使用 DGI 进行节点嵌入

+

+使用深度图信息最大化(DGI)在 Cora 数据集上进行无监督节点嵌入。

+

+#### 步骤 1:导入数据集(如需)

+

+```python

+from hugegraph_ml.utils.dgl2hugegraph_utils import import_graph_from_dgl

+

+# 从 DGL 导入 Cora 数据集到 HugeGraph

+import_graph_from_dgl("cora")

+```

+

+#### 步骤 2:转换图数据

+

+```python

+from hugegraph_ml.data.hugegraph2dgl import HugeGraph2DGL

+

+# 将 HugeGraph 数据转换为 DGL 格式

+hg2d = HugeGraph2DGL()

+graph = hg2d.convert_graph(vertex_label="CORA_vertex", edge_label="CORA_edge")

+```

+

+#### 步骤 3:初始化模型

+

+```python

+from hugegraph_ml.models.dgi import DGI

+

+# 创建 DGI 模型

+model = DGI(n_in_feats=graph.ndata["feat"].shape[1])

+```

+

+#### 步骤 4:训练并生成嵌入

+

+```python

+from hugegraph_ml.tasks.node_embed import NodeEmbed

+

+# 训练模型并生成节点嵌入

+node_embed_task = NodeEmbed(graph=graph, model=model)

+embedded_graph = node_embed_task.train_and_embed(

+ add_self_loop=True,

+ n_epochs=300,

+ patience=30

+)

+```

+

+#### 步骤 5:下游任务(节点分类)

+

+```python

+from hugegraph_ml.models.mlp import MLPClassifier

+from hugegraph_ml.tasks.node_classify import NodeClassify

+

+# 使用嵌入进行节点分类

+model = MLPClassifier(

+ n_in_feat=embedded_graph.ndata["feat"].shape[1],

+ n_out_feat=embedded_graph.ndata["label"].unique().shape[0]

+)

+node_clf_task = NodeClassify(graph=embedded_graph, model=model)

+node_clf_task.train(lr=1e-3, n_epochs=400, patience=40)

+print(node_clf_task.evaluate())

+```

+

+**预期输出:**

+```python

+{'accuracy': 0.82, 'loss': 0.5714246034622192}

+```

+

+**完整示例**:参见 [dgi_example.py](https://github.com/apache/hugegraph-ai/blob/main/hugegraph-ml/src/hugegraph_ml/examples/dgi_example.py)

+

+### 示例 2:使用 GRAND 进行节点分类

+

+使用 GRAND 模型直接对节点进行分类(无需单独的嵌入步骤)。

+

+```python

+from hugegraph_ml.data.hugegraph2dgl import HugeGraph2DGL

+from hugegraph_ml.models.grand import GRAND

+from hugegraph_ml.tasks.node_classify import NodeClassify

+

+# 加载图

+hg2d = HugeGraph2DGL()

+graph = hg2d.convert_graph(vertex_label="CORA_vertex", edge_label="CORA_edge")

+

+# 初始化 GRAND 模型

+model = GRAND(

+ n_in_feats=graph.ndata["feat"].shape[1],

+ n_out_feats=graph.ndata["label"].unique().shape[0]

+)

+

+# 训练和评估

+node_clf_task = NodeClassify(graph=graph, model=model)

+node_clf_task.train(lr=1e-2, n_epochs=1500, patience=100)

+print(node_clf_task.evaluate())

+```

+

+**完整示例**:参见 [grand_example.py](https://github.com/apache/hugegraph-ai/blob/main/hugegraph-ml/src/hugegraph_ml/examples/grand_example.py)

+

+## 核心组件

+

+### HugeGraph2DGL 转换器

+

+无缝将 HugeGraph 数据转换为 DGL 图格式:

+

+```python

+from hugegraph_ml.data.hugegraph2dgl import HugeGraph2DGL

+

+hg2d = HugeGraph2DGL()

+graph = hg2d.convert_graph(

+ vertex_label="person", # 要提取的顶点标签

+ edge_label="knows", # 要提取的边标签

+ directed=False # 图的方向性

+)

+```

+

+### 任务抽象

+

+用于常见 ML 工作流的可复用任务对象:

+

+| 任务 | 类 | 用途 |

+|-----|-----|------|

+| 节点嵌入 | `NodeEmbed` | 生成无监督节点嵌入 |

+| 节点分类 | `NodeClassify` | 预测节点标签 |

+| 图分类 | `GraphClassify` | 预测图级标签 |

+| 链接预测 | `LinkPredict` | 预测缺失边 |

+

+## 最佳实践

+

+1. **从小数据集开始**:在扩展之前先在小图(例如 Cora、Citeseer)上测试您的流程

+2. **使用早停**:设置 `patience` 参数以避免过拟合

+3. **调整超参数**:根据数据集大小调整学习率、隐藏维度和周期数

+4. **监控 GPU 内存**:大图可能需要批量训练(例如 Cluster-GCN)

+5. **验证 Schema**:确保顶点/边标签与您的 HugeGraph schema 匹配
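第 2 条中 `patience` 的含义可以用下面的草图说明:连续 `patience` 个 epoch 验证损失没有创新低,就停止训练。这是通用早停逻辑的示意,hugegraph-ml 任务内部使用的监控指标和细节可能不同:

```python
def early_stopping_epochs(val_losses, patience):
    """返回训练实际停止的 epoch(从 1 开始计)。"""
    best, wait = float("inf"), 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best:
            best, wait = loss, 0  # 有改进:记录最优值并重置计数
        else:
            wait += 1             # 无改进:累计等待轮数
            if wait >= patience:
                return epoch
    return len(val_losses)        # 未触发早停,跑完全部 epoch


losses = [1.0, 0.8, 0.7, 0.71, 0.72, 0.73, 0.69]
print(early_stopping_epochs(losses, patience=3))  # 6
```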

+

+## 故障排除

+

+| 问题 | 解决方案 |

+|-----|---------|

+| 连接 HugeGraph "Connection refused" | 验证服务器是否在 8080 端口运行 |

+| CUDA 内存不足 | 减少批大小或使用仅 CPU 模式 |

+| 模型收敛问题 | 尝试不同的学习率(1e-2、1e-3、1e-4) |

+| DGL 的 ImportError | 运行 `uv sync` 重新安装依赖 |

+

+## 贡献

+

+添加新算法:

+

+1. 在 `src/hugegraph_ml/models/your_model.py` 创建模型文件

+2. 继承基础模型类并实现 `forward()` 方法

+3. 在 `src/hugegraph_ml/examples/` 添加示例脚本

+4. 更新此文档并添加算法详情

+

+## 另见

+

+- [HugeGraph-AI 概述](../_index.md) - 完整 AI 生态系统

+- [HugeGraph-LLM](./hugegraph-llm.md) - RAG 和知识图谱构建

+- [GitHub 仓库](https://github.com/apache/hugegraph-ai/tree/main/hugegraph-ml) - 源代码和示例

diff --git a/content/cn/docs/quickstart/hugegraph-ai/quick_start.md b/content/cn/docs/quickstart/hugegraph-ai/quick_start.md

index 6d8d22f90..da148f7e7 100644

--- a/content/cn/docs/quickstart/hugegraph-ai/quick_start.md

+++ b/content/cn/docs/quickstart/hugegraph-ai/quick_start.md

@@ -190,3 +190,63 @@ graph TD;

# 5. 图工具

输入 Gremlin 查询以执行相应操作。

+

+# 6. 语言切换 (v1.5.0+)

+

+HugeGraph-LLM 支持双语提示词,以提高跨语言的准确性。

+

+### 在英文和中文之间切换

+

+系统语言影响:

+- **系统提示词**:LLM 使用的内部提示词

+- **关键词提取**:特定语言的提取逻辑

+- **答案生成**:响应格式和风格

+

+#### 配置方法一:环境变量

+

+编辑您的 `.env` 文件:

+

+```bash

+# 英文提示词(默认)

+LANGUAGE=EN

+

+# 中文提示词

+LANGUAGE=CN

+```

+

+更改语言设置后重启服务。

+

+#### 配置方法二:Web UI(动态)

+

+如果您的部署中可用,使用 Web UI 中的设置面板切换语言,无需重启:

+

+1. 导航到**设置**或**配置**选项卡

+2. 选择**语言**:`EN` 或 `CN`

+3. 点击**保存** - 更改立即生效

+

+#### 特定语言的行为

+

+| 语言 | 关键词提取 | 答案风格 | 使用场景 |

+|-----|-----------|---------|---------|

+| `EN` | 英文 NLP 模型 | 专业、简洁 | 国际用户、英文文档 |

+| `CN` | 中文 NLP 模型 | 自然的中文表达 | 中文用户、中文文档 |

+

+> [!TIP]

+> 将 `LANGUAGE` 设置与您的主要文档语言匹配,以获得最佳 RAG 准确性。

+

+### REST API 语言覆盖

+

+使用 REST API 时,您可以为每个请求指定自定义提示词,以覆盖默认语言设置:

+

+```bash

+curl -X POST http://localhost:8001/rag \

+ -H "Content-Type: application/json" \

+ -d '{

+ "query": "告诉我关于阿尔·帕西诺的信息",

+ "graph_only": true,

+ "keywords_extract_prompt": "请从以下文本中提取关键实体...",

+ "answer_prompt": "请根据以下上下文回答问题..."

+ }'

+```
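如需在 Python 中发起同样的请求,可以用标准库 `urllib` 构造。以下草图只使用上面 curl 示例中出现的字段,不对响应格式做假设:

```python
import json
import urllib.request

payload = {
    "query": "告诉我关于阿尔·帕西诺的信息",
    "graph_only": True,
    "keywords_extract_prompt": "请从以下文本中提取关键实体...",
    "answer_prompt": "请根据以下上下文回答问题...",
}
req = urllib.request.Request(
    "http://localhost:8001/rag",
    data=json.dumps(payload, ensure_ascii=False).encode("utf-8"),
    headers={"Content-Type": "application/json"},
    method="POST",
)
# 服务启动后再发送:
# with urllib.request.urlopen(req, timeout=60) as resp:
#     print(json.load(resp))
```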

+

+完整参数详情请参阅 [REST API 参考](./rest-api.md)。

diff --git a/content/cn/docs/quickstart/hugegraph-ai/rest-api.md b/content/cn/docs/quickstart/hugegraph-ai/rest-api.md

new file mode 100644

index 000000000..349ff4c06

--- /dev/null

+++ b/content/cn/docs/quickstart/hugegraph-ai/rest-api.md

@@ -0,0 +1,428 @@

+---

+title: "REST API 参考"

+linkTitle: "REST API"

+weight: 5

+---

+

+HugeGraph-LLM 提供 REST API 端点,用于将 RAG 和 Text2Gremlin 功能集成到您的应用程序中。

+

+## 基础 URL

+

+```

+http://localhost:8001

+```

+

+启动服务时更改主机/端口:

+```bash

+python -m hugegraph_llm.demo.rag_demo.app --host 127.0.0.1 --port 8001

+```

+

+## 认证

+

+目前 API 支持可选的基于令牌的认证:

+

+```bash

+# 在 .env 中启用认证

+ENABLE_LOGIN=true

+USER_TOKEN=your-user-token

+ADMIN_TOKEN=your-admin-token

+```

+

+在请求头中传递令牌:

+```bash

+Authorization: Bearer 点击展开/折叠 MySQL 配置及启动方法

@@ -410,6 +437,8 @@ Connecting to HugeGraphServer (http://127.0.0.1:8080/graphs)....OK ##### 5.1.5 Cassandra +> ⚠️ **已废弃**: 此后端从 HugeGraph 1.7.0 版本开始已移除。如需使用,请参考 1.5.x 版本文档。 +点击展开/折叠 Cassandra 配置及启动方法

@@ -495,6 +524,8 @@ Connecting to HugeGraphServer (http://127.0.0.1:8080/graphs)....OK ##### 5.1.7 ScyllaDB +> ⚠️ **已废弃**: 此后端从 HugeGraph 1.7.0 版本开始已移除。如需使用,请参考 1.5.x 版本文档。 +点击展开/折叠 ScyllaDB 配置及启动方法

@@ -563,12 +594,14 @@ Connecting to HugeGraphServer (http://127.0.0.1:8080/graphs)......OK ##### 5.2.1 使用 Cassandra 作为后端 +> ⚠️ **已废弃**: Cassandra 后端从 HugeGraph 1.7.0 版本开始已移除。如需使用,请参考 1.5.x 版本文档。 +点击展开/折叠 Cassandra 配置及启动方法

在使用 Docker 的时候,我们可以使用 Cassandra 作为后端存储。我们更加推荐直接使用 docker-compose 来对于 server 以及 Cassandra 进行统一管理 -样例的 `docker-compose.yml` 可以在 [github](https://github.com/apache/incubator-hugegraph/blob/master/hugegraph-server/hugegraph-dist/docker/example/docker-compose-cassandra.yml) 中获取,使用 `docker-compose up -d` 启动。(如果使用 cassandra 4.0 版本作为后端存储,则需要大约两个分钟初始化,请耐心等待) +样例的 `docker-compose.yml` 可以在 [github](https://github.com/apache/hugegraph/blob/master/hugegraph-server/hugegraph-dist/docker/example/docker-compose-cassandra.yml) 中获取,使用 `docker-compose up -d` 启动。(如果使用 cassandra 4.0 版本作为后端存储,则需要大约两个分钟初始化,请耐心等待) ```yaml version: "3" @@ -631,17 +664,17 @@ volumes: 1. 使用`docker run` - 使用 `docker run -itd --name=server -p 8080:8080 -e PRELOAD=true hugegraph/hugegraph:1.5.0` + 使用 `docker run -itd --name=server -p 8080:8080 -e PRELOAD=true hugegraph/hugegraph:1.7.0` 2. 使用`docker-compose` - 创建`docker-compose.yml`,具体文件如下,在环境变量中设置 PRELOAD=true。其中,[`example.groovy`](https://github.com/apache/incubator-hugegraph/blob/master/hugegraph-server/hugegraph-dist/src/assembly/static/scripts/example.groovy) 是一个预定义的脚本,用于预加载样例数据。如果有需要,可以通过挂载新的 `example.groovy` 脚本改变预加载的数据。 + 创建`docker-compose.yml`,具体文件如下,在环境变量中设置 PRELOAD=true。其中,[`example.groovy`](https://github.com/apache/hugegraph/blob/master/hugegraph-server/hugegraph-dist/src/assembly/static/scripts/example.groovy) 是一个预定义的脚本,用于预加载样例数据。如果有需要,可以通过挂载新的 `example.groovy` 脚本改变预加载的数据。 ```yaml version: '3' services: server: - image: hugegraph/hugegraph:1.5.0 + image: hugegraph/hugegraph:1.7.0 container_name: server environment: - PRELOAD=true diff --git a/content/cn/docs/quickstart/toolchain/_index.md b/content/cn/docs/quickstart/toolchain/_index.md index 776d935b2..6b318fa74 100644 --- a/content/cn/docs/quickstart/toolchain/_index.md +++ b/content/cn/docs/quickstart/toolchain/_index.md @@ -6,8 +6,8 @@ weight: 2 > **测试指南**:如需在本地运行工具链测试,请参考 [HugeGraph 工具链本地测试指南](/cn/docs/guides/toolchain-local-test) -## 🚀 最佳实践:优先使用 DeepWiki 智能文档 +> DeepWiki 
提供实时更新的项目文档,内容更全面准确,适合快速了解项目最新情况。 +> +> 📖 [https://deepwiki.com/apache/hugegraph-toolchain](https://deepwiki.com/apache/hugegraph-toolchain) -> 为解决静态文档可能过时的问题,我们提供了 **实时更新、内容更全面** 的 DeepWiki。它相当于一个拥有项目最新知识的专家,非常适合**所有开发者**在开始项目前阅读和咨询。 - -**👉 强烈推荐访问并对话:**[**incubator-hugegraph-toolchain**](https://deepwiki.com/apache/incubator-hugegraph-toolchain) +**GitHub 访问:** [https://github.com/apache/hugegraph-toolchain](https://github.com/apache/hugegraph-toolchain) diff --git a/content/cn/docs/quickstart/toolchain/hugegraph-hubble.md b/content/cn/docs/quickstart/toolchain/hugegraph-hubble.md index 2167c0a72..51adbc0bf 100644 --- a/content/cn/docs/quickstart/toolchain/hugegraph-hubble.md +++ b/content/cn/docs/quickstart/toolchain/hugegraph-hubble.md @@ -90,7 +90,7 @@ services: `hubble`项目在`toolchain`项目中,首先下载`toolchain`的 tar 包 ```bash -wget https://downloads.apache.org/incubator/hugegraph/{version}/apache-hugegraph-toolchain-incubating-{version}.tar.gz +wget https://downloads.apache.org/hugegraph/{version}/apache-hugegraph-toolchain-incubating-{version}.tar.gz tar -xvf apache-hugegraph-toolchain-incubating-{version}.tar.gz cd apache-hugegraph-toolchain-incubating-{version}.tar.gz/apache-hugegraph-hubble-incubating-{version} ``` @@ -551,3 +551,26 @@ Hubble 上暂未提供可视化的 OLAP 算法执行,可调用 RESTful API 进

Apache HugeGraph

-

- Incubating

HugeGraph is a convenient, efficient, and adaptable graph database

-compatible with the Apache TinkerPop3 framework and the Gremlin query language.

+HugeGraph is a full-stack graph system covering graph database, graph computing, and graph AI.

+It provides complete graph data processing capabilities, from storage and real-time querying to offline analysis, and supports both the Gremlin and Cypher query languages.

{{< blocks/link-down color="info" >}} {{< /blocks/cover >}} {{% blocks/lead color="primary" %}} -HugeGraph supports fast import performance in the case of more than 10 billion Vertices and Edges

-Graph, millisecond-level OLTP query capability, and large-scale distributed

-graph processing (OLAP). The main scenarios of HugeGraph include

-correlation search, fraud detection, and knowledge graph.

+HugeGraph supports high-speed import and millisecond-level real-time queries on graphs with up to hundreds of billions of vertices and edges, integrates deeply with big data platforms such as Spark and Flink, and provides the HugeGraph toolchain for data import, visualization, and operations.

+In the AI era, combined with Large Language Models (LLMs), it provides powerful graph computing capabilities for intelligent Q&A, recommendation systems, fraud detection, and knowledge graph applications.

{{% /blocks/lead %}} {{< blocks/section color="dark" >}} {{% blocks/feature icon="fa-lightbulb" title="Convenient" %}} -Not only supports Gremlin graph query language and RESTful API but also provides commonly used graph algorithm APIs. To help users easily implement various queries and analyses, HugeGraph has a full range of accessory tools, such as supporting distributed storage, data replication, scaling horizontally, and supports many built-in backends of storage engines. - - +Supports Gremlin graph query language and [**RESTful API**]({{< relref "/docs/clients/restful-api" >}}), and provides commonly used graph retrieval interfaces. It includes a complete set of supporting tools with distributed storage, data replication, horizontal scaling, and multiple built-in backend storage engines for efficient query and analysis workflows. {{% /blocks/feature %}} {{% blocks/feature icon="fa-shipping-fast" title="Efficient" %}} -Has been deeply optimized in graph storage and graph computation. It provides multiple batch import tools that can easily complete the fast-import of tens of billions of data, achieves millisecond-level response for graph retrieval through ameliorated queries, and supports concurrent online and real-time operations for thousands of users. +Deeply optimized for graph storage and graph computing, it provides [**batch import tools**]({{< relref "/docs/quickstart/toolchain/hugegraph-loader" >}}) to efficiently ingest massive data, achieves millisecond-level graph retrieval latency with optimized queries, and supports concurrent online operations for thousands of users. {{% /blocks/feature %}} {{% blocks/feature icon="fa-exchange-alt" title="Adaptable" %}} -Adapts to the Apache Gremlin standard graph query language and the Property Graph standard modeling method, and both support graph-based OLTP and OLAP schemes. 
Furthermore, HugeGraph can be integrated with Hadoop and Spark's big data platforms, and easily extend the back-end storage engine through plug-ins. +Supports the Apache Gremlin standard graph query language and the Property Graph modeling method, with both OLTP and [**OLAP graph computing**]({{< relref "/docs/quickstart/computing/hugegraph-computer" >}}) scenarios. It can be integrated with Hadoop and Spark and can extend backend storage engines through plug-ins. +{{% /blocks/feature %}} + + +{{% blocks/feature icon="fa-brain" title="AI-Ready" %}} +Integrates LLM with [**GraphRAG capabilities**]({{< relref "/docs/quickstart/hugegraph-ai" >}}), automated knowledge graph construction, and 20+ built-in graph machine learning algorithms to build AI-driven graph applications. +{{% /blocks/feature %}} + + +{{% blocks/feature icon="fa-expand-arrows-alt" title="Scalable" %}} +Supports horizontal scaling and distributed deployment, seamlessly migrating from standalone to PB-level clusters, and provides [**distributed storage engine**]({{< relref "/docs/quickstart/hugegraph/hugegraph-hstore" >}}) options for different scale and performance requirements. +{{% /blocks/feature %}} + + +{{% blocks/feature icon="fa-puzzle-piece" title="Open Ecosystem" %}} +Adheres to Apache TinkerPop standards, provides [**multi-language clients**]({{< relref "/docs/quickstart/client/hugegraph-client" >}}), is compatible with mainstream big data platforms, and is backed by an active and evolving community. {{% /blocks/feature %}} @@ -57,7 +65,7 @@Apache

{{< blocks/section color="blue-deep">}}

-The first graph database project in Apache

+The First Top-Level Graph Project of the Apache Software Foundation

{{< /blocks/section >}}

@@ -66,19 +74,19 @@ The first graph database project in Apache

{{< blocks/section >}}

{{% blocks/feature icon="far fa-tools" title="Get The **Toolchain**" %}}

-[It](https://github.com/apache/incubator-hugegraph-toolchain) includes graph loader & dashboard & backup tools

+[It](https://github.com/apache/hugegraph-toolchain) includes graph loader & dashboard & backup tools

{{% /blocks/feature %}}

-{{% blocks/feature icon="fab fa-github" title="Efficient" url="https://github.com/apache/incubator-hugegraph" %}}

-We do a [Pull Request](https://github.com/apache/incubator-hugegraph/pulls) contributions workflow on **GitHub**. New users are always welcome!

+{{% blocks/feature icon="fab fa-github" title="Efficient" url="https://github.com/apache/hugegraph" %}}

+We do a [Pull Request](https://github.com/apache/hugegraph/pulls) contributions workflow on **GitHub**. New users are always welcome!

{{% /blocks/feature %}}

-{{% blocks/feature icon="fab fa-weixin" title="Follow us on Wechat!" url="https://twitter.com/apache-hugegraph" %}}

-Follow the official account "HugeGraph" to get the latest news

+{{% blocks/feature icon="fab fa-slack" title="Join us on Slack!" url="https://the-asf.slack.com/archives/C059UU2FJ23" %}}

+Join the [ASF Slack channel](https://the-asf.slack.com/archives/C059UU2FJ23) for community discussions

-PS: twitter account it's on the way

+You can also follow the WeChat official account "HugeGraph" for updates

{{% /blocks/feature %}}

diff --git a/content/en/blog/hugegraph-ai/agentic_graphrag.md b/content/en/blog/hugegraph-ai/agentic_graphrag.md

index ac1112871..9b92437d3 100644

--- a/content/en/blog/hugegraph-ai/agentic_graphrag.md

+++ b/content/en/blog/hugegraph-ai/agentic_graphrag.md

@@ -1,7 +1,7 @@

---

date: 2025-10-29

title: "Agentic GraphRAG"

-linkTitle: "Agentic GraphRAG"

+linkTitle: "Agentic GraphRAG: A Modular Architecture Practice"

---

# Project Background

diff --git a/content/en/blog/hugegraph/toplingdb/toplingdb-quick-start.md b/content/en/blog/hugegraph/toplingdb/toplingdb-quick-start.md

index 3393a26cd..599be5000 100644

--- a/content/en/blog/hugegraph/toplingdb/toplingdb-quick-start.md

+++ b/content/en/blog/hugegraph/toplingdb/toplingdb-quick-start.md

@@ -136,5 +136,5 @@ Caused by: org.rocksdb.RocksDBException: While lock file: rocksdb-data/data/m/LO

## Related Documentation

- [ToplingDB YAML Configuration Explained](/blog/2025/09/30/toplingdb-yaml-configuration-file/) – Understand each parameter in the config file

-- [HugeGraph Configuration Guide](/docs/config/config-option/) – Reference for core HugeGraph settings

+- [HugeGraph Configuration Guide](/docs/config/config-option) – Reference for core HugeGraph settings

- [ToplingDB GitHub Repository](https://github.com/topling/toplingdb) – Official docs and latest updates

diff --git a/content/en/community/_index.md b/content/en/community/_index.md

index cdade1630..1ae39a248 100644

--- a/content/en/community/_index.md

+++ b/content/en/community/_index.md

@@ -5,4 +5,4 @@ menu:

weight: 40

---

-

+Visit the [Project Maturity]({{< relref "maturity" >}}) assessment.

diff --git a/maturity.md b/content/en/community/maturity.md

similarity index 76%

rename from maturity.md

rename to content/en/community/maturity.md

index 6f0d7e3c2..5ff25f46e 100644

--- a/maturity.md

+++ b/content/en/community/maturity.md

@@ -1,4 +1,10 @@

-# Maturity Assessment for Apache HugeGraph (incubating)

+---

+title: Maturity

+description: Apache HugeGraph maturity assessment

+weight: 50

+---

+

+# Maturity Assessment for Apache HugeGraph

The goals of this maturity model are to describe how Apache projects operate in a concise and high-level way, and to provide a basic framework that projects may choose to use to evaluate themselves.

@@ -14,37 +20,37 @@ The following table is filled according to the [Apache Maturity Model](https://c

### CODE

-| **ID** | **Description** | **Status** |

-| -------- | ----- | ---------- |

+| **ID** | **Description** | **Status** |

+| -------- | --------------- | ---------- |

| **CD10** | The project produces Open Source software for distribution to the public, at no charge. | **YES** The project source code is licensed under the `Apache License 2.0`. |

-| **CD20** | Anyone can easily discover and access the project's code.. | **YES** The [official website](https://hugegraph.apache.org/) includes a link to the [GitHub repository](https://github.com/apache/hugegraph). |

+| **CD20** | Anyone can easily discover and access the project's code. | **YES** The [official website](https://hugegraph.apache.org/) includes a link to the [GitHub repository](https://github.com/apache/hugegraph). |

| **CD30** | Anyone using standard, widely-available tools, can build the code in a reproducible way. | **YES** Apache HugeGraph provides a [Quick Start](https://hugegraph.apache.org/docs/quickstart/hugegraph/hugegraph-server/) document that explains how to compile the source code. |

-| **CD40** | The full history of the project's code is available via a source code control system, in a way that allows anyone to recreate any released version. _ | **YES** The project uses Git, and anyone can view the full history of the project via commit logs and tags for each release. |

+| **CD40** | The full history of the project's code is available via a source code control system, in a way that allows anyone to recreate any released version. | **YES** The project uses Git, and anyone can view the full history of the project via commit logs and tags for each release. |

| **CD50** | The source code control system establishes the provenance of each line of code in a reliable way, based on strong authentication of the committer. When third parties contribute code, commit messages provide reliable information about the code provenance. | **YES** The project uses GitHub managed by Apache Infra, ensuring provenance of each line of code to a committer. Third-party contributions are accepted via pull requests in accordance with the [Contribution Guidelines](https://hugegraph.apache.org/docs/contribution-guidelines/).|

### LICENSE

-| **ID** | **Description** | **Status** |

+| **ID** | **Description** | **Status** |

| -------- | ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- | ---------- |