diff --git a/AbhishekBhosale_C#/AbhishekBhosale_Objectdetection_OpenCV.ipynb b/AbhishekBhosale_C#/AbhishekBhosale_Objectdetection_OpenCV.ipynb

new file mode 100644

index 000000000..0d7d0433c

--- /dev/null

+++ b/AbhishekBhosale_C#/AbhishekBhosale_Objectdetection_OpenCV.ipynb

@@ -0,0 +1,276 @@

+{

+ "cells": [

+ {

+ "cell_type": "markdown",

+ "metadata": {},

+ "source": [

+ "# Imports"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": 1,

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "import numpy as np\n",

+ "import cv2 as cv"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "metadata": {},

+ "source": [

+ "# Loading the Haar Cascade Classifier (xml) files and images"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": 9,

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "# Loads all the classifiers\n",

+ "face_cascade = cv.CascadeClassifier('haarcascade_frontalface_default.xml')\n",

+ "eye_cascade = cv.CascadeClassifier('haarcascade_eye.xml')\n",

+ "fullbody_cascade = cv.CascadeClassifier('haarcascade_fullbody.xml')\n",

+ "cars_cascade = cv.CascadeClassifier('cars.xml')\n",

+ "bus_cascade = cv.CascadeClassifier('bus.xml')\n",

+ "\n",

+ "# Loads all the images\n",

+ "img = cv.imread('face.jpg')\n",

+ "body = cv.imread('body1.jpg')\n",

+ "car = cv.imread('cars1.jpeg')"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "metadata": {},

+ "source": [

+ "# Defining object detection function"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": 3,

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "def object_detection(image, cascade, text, color = (255, 0, 0)):\n",

+ " \n",

+ " # Our operations on the frame come here\n",

+ " gray = cv.cvtColor(image, cv.COLOR_BGR2GRAY)\n",

+ " objects = cascade.detectMultiScale(gray, 1.3, 5)\n",

+ " for (x, y, w, h) in objects:\n",

+ " \n",

+ " # Draws rectangle around the objects\n",

+ " image = cv.rectangle(image, (x,y), (x+w , y+h), color, 2)\n",

+ " \n",

+ " # Display text on top of rectangle\n",

+ " cv.putText(image, text, (x, y-10), cv.FONT_HERSHEY_SIMPLEX, 0.9, color, 2)\n",

+ " \n",

+ " # Display the resulting frame\n",

+ " cv.imshow('img', image)"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "metadata": {},

+ "source": [

+ "## Face detection and eye detection"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": 4,

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "# Detects the faces\n",

+ "object_detection(img, face_cascade, 'face')\n",

+ "cv.waitKey(0)\n",

+ "# Closes the image window\n",

+ "cv.destroyAllWindows()\n",

+ "\n",

+ "# Detects the eyes\n",

+ "object_detection(img, eye_cascade, 'eye')\n",

+ "cv.waitKey(0)\n",

+ "# Closes the image window\n",

+ "cv.destroyAllWindows()"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": 5,

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "cap = cv.VideoCapture(0)\n",

+ "\n",

+ "while(True):\n",

+ " # Capture frame-by-frame\n",

+ " ret, frame = cap.read()\n",

+ "\n",

+ " # Our operations on the frame come here\n",

+ "# gray = cv.cvtColor(frame, cv.COLOR_BGR2GRAY)\n",

+ " \n",

+ "# faces = face_cascade.detectMultiScale(gray, 1.3, 5)\n",

+ "# for (x, y, w, h) in faces:\n",

+ "# frame = cv.rectangle(frame, (x,y), (x+w , y+h), (255, 0, 0), 2)\n",

+ "# roi_gray = gray[y:y+h, x:x+w]\n",

+ "# roi_color = frame[y:y+h, x:x+w]\n",

+ "# eyes = eye_cascade.detectMultiScale(roi_gray)\n",

+ "# for (ex, ey, ew, eh) in eyes:\n",

+ "# cv.rectangle(roi_color, (ex,ey), (ex+ew , ey+eh), (0, 255, 0), 2)\n",

+ "\n",

+ "# # Display the resulting frame\n",

+ "# cv.imshow('frame', frame)\n",

+ "\n",

+ " object_detection(frame, face_cascade, 'face')\n",

+ " # Waits for Q key to quit\n",

+ " if cv.waitKey(1) & 0xFF == ord('q'):\n",

+ " break\n",

+ "\n",

+ "# When everything done, release the capture\n",

+ "cap.release()\n",

+ "cv.destroyAllWindows()"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "metadata": {},

+ "source": [

+ "## Car detection"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": 6,

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "# Loads the video\n",

+ "cap = cv.VideoCapture('cars.avi')\n",

+ "\n",

+ "while(True):\n",

+ " \n",

+ " # Capture frame-by-frame\n",

+ " ret, frame = cap.read()\n",

+ " \n",

+ " # Breaks at the end of video\n",

+ " if (type(frame) == type(None)):\n",

+ " break\n",

+ " \n",

+ " # Calls the object detection function\n",

+ " object_detection(frame, cars_cascade, 'car')\n",

+ " \n",

+ " if cv.waitKey(1) & 0xFF == ord('q'):\n",

+ " break\n",

+ "\n",

+ "# When everything done, release the capture\n",

+ "cap.release()\n",

+ "cv.destroyAllWindows()"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "metadata": {},

+ "source": [

+ "## Human detection"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": 7,

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "# Loads the video\n",

+ "cap = cv.VideoCapture('pedestrians.avi')\n",

+ "\n",

+ "while(True):\n",

+ " \n",

+ " # Capture frame-by-frame\n",

+ " ret, frame = cap.read()\n",

+ " \n",

+ " # Breaks at the end of video\n",

+ " if (type(frame) == type(None)):\n",

+ " break\n",

+ " \n",

+ " # Calls the object detection function\n",

+ " object_detection(frame, fullbody_cascade, 'human')\n",

+ " \n",

+ " if cv.waitKey(1) & 0xFF == ord('q'):\n",

+ " break\n",

+ "\n",

+ "# When everything done, release the capture\n",

+ "cap.release()\n",

+ "cv.destroyAllWindows()"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "metadata": {},

+ "source": [

+ "## Bus detection"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": 8,

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "# Loads the video\n",

+ "cap = cv.VideoCapture('bus1.mp4')\n",

+ "\n",

+ "while(True):\n",

+ " \n",

+ " # Capture frame-by-frame\n",

+ " ret, frame = cap.read()\n",

+ " \n",

+ " # Breaks at the end of video\n",

+ " if (type(frame) == type(None)):\n",

+ " break\n",

+ " \n",

+ " # Calls the object detection function\n",

+ " object_detection(frame, bus_cascade, 'bus')\n",

+ " \n",

+ " if cv.waitKey(1) & 0xFF == ord('q'):\n",

+ " break\n",

+ "\n",

+ "# When everything done, release the capture\n",

+ "cap.release()\n",

+ "cv.destroyAllWindows()"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "metadata": {},

+ "outputs": [],

+ "source": []

+ }

+ ],

+ "metadata": {

+ "kernelspec": {

+ "display_name": "Python 3",

+ "language": "python",

+ "name": "python3"

+ },

+ "language_info": {

+ "codemirror_mode": {

+ "name": "ipython",

+ "version": 3

+ },

+ "file_extension": ".py",

+ "mimetype": "text/x-python",

+ "name": "python",

+ "nbconvert_exporter": "python",

+ "pygments_lexer": "ipython3",

+ "version": "3.7.6"

+ }

+ },

+ "nbformat": 4,

+ "nbformat_minor": 4

+}

diff --git a/AbhishekBhosale_C#/AbhishekBhosale_Objectdetection_OpenCV.md b/AbhishekBhosale_C#/AbhishekBhosale_Objectdetection_OpenCV.md

new file mode 100644

index 000000000..491d86065

--- /dev/null

+++ b/AbhishekBhosale_C#/AbhishekBhosale_Objectdetection_OpenCV.md

@@ -0,0 +1,40 @@

+# Created by Abhishek

+

+## Object Detection

+

+In this page for Object Detection we will be using Haar feature-based Cascade classifiers.

+

+**What is that?**

+

+It is an effective object detection method proposed by Paul Viola and Michael Jones in their paper, “Rapid Object Detection using a Boosted Cascade of Simple Features” in 2001. It is a machine learning based approach where a cascade function is trained from a lot of positive and negative images. It is then used to detect objects in other images.

+

+Initially, the algorithim need a lot of positive images and negative images to train the classifier. Then we need to extract features from it so for this we use haar features as shown the image below.Now all possible sizes and locations of each kernel s used to calculate plenty of features. But among all these features we calculated, most of them are irrelevant.For this, we apply each and every feature on all the training images. For each feature, it finds the best threshold which will classify the faces to positive and negative. But obviously, there will be errors or misclassifications. We select the features with minimum error rate, which means they are the features that best classifies the face and non-face images.Final classifier is a weighted sum of these weak classifiers. It is called weak because it alone can’t classify the image, but together with others forms a strong classifier. The paper says even 200 features provide detection with 95% accuracy. Their final setup had around 6000 features.

+

+

+

+In an image, most of the image region is non-face region. So it is a better idea to have a simple method to check if a window is not a face region. If it is not, discard it in a single shot. Don’t process it again. Instead focus on region where there can be a face. This way, we can find more time to check a possible face region.

+

+For this they introduced the concept of Cascade of Classifiers. Instead of applying all the 6000 features on a window, group the features into different stages of classifiers and apply one-by-one.If a window fails the first stage, discard it. We don’t consider remaining features on it. If it passes, apply the second stage of features and continue the process. The window which passes all stages is a face region.

+

+So this is a simple intuitive explanation of how Viola-Jones face detection works.

+

+---

+

+**Now lets take an example like face detection and eye detection and see what are the process that needs to be done.**

+

+* A image when we import using OpenCV it is of the color format BGR. So we need to change it to grayscale to apply the Haar Cascade Classifier.

+* Now to find the faces we use the **detectMultiScale()** function and it returns values of the format **(x, y, w, h)** where x is the starting x-coordinate, y is the starting y-coordinate and w is the width and h is the height.

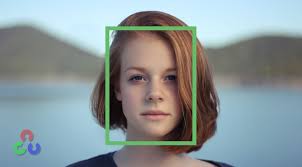

+* Then to make it visible on the photo we use **rectangle()** function and draw rectangle around it like the below picture.

+

+* Now we can jus take out the part of the image of the face using **roi()** funtion and then apply the eye cascade classifier for this part by repeating the above steps.

+

+

+**We can also apply this classifier for a video as well.**

+

+The steps are as follows:

+* First the video capture needs to be turned on using **VideoCapture()** function.

+* Next the current frame needs to extracted using **read** function.

+* Now we need to do the same process as we did for an image.

+

+

+The full code is [here](https://github.com/Abhixtrent28/Open-contributions/blob/2082bdc61628061754183107be7baf459e1deb62/AbhishekBhosale_C%23/AbhishekBhosale_Objectdetection_OpenCV.ipynb)

diff --git a/AbhishekBhosale_C#/Markdown.md b/AbhishekBhosale_C#/Markdown.md

new file mode 100644

index 000000000..2a7c3fef8

--- /dev/null

+++ b/AbhishekBhosale_C#/Markdown.md

@@ -0,0 +1,286 @@

+# Introduction to Markdown

+

+Markdown is a lightweight markup language with plain text formatting syntax. What this means to you is that by using just a few extra symbols in your text, Markdown helps you create a document with an explicit structure.

+

+## Why Markdown

+

+* **Easy** The syntax is very simple.

+

+* **Fast:** It speeds up the workflows.

+

+* **Clean:** No missing closing tags, no improperly nested tags, no blocks left without containers.

+

+* **Portable:** Cross-platform by nature.

+

+* **Flexible:** Output documents to a wide array of formats like, convert to HTML , rich text for sending emails or any number of other proprietary formats.

+

+

+> We will be using markdown in almost every module's assignment, so it is an important thing to learn here if you don't know already.

+

+## Basic working in Markdown

+

+### 1. Heading

+

+Use # for headings. You can use this like in HTML for H1, H2 etc.

+

+

+

+**Input:**

+

+

+

+```md

+

+# H1

+## H2

+### H3

+.

+.

+###### H6

+```

+

+**Output:**

+

+# H1

+## H2

+### H3

+.

+

+.

+###### H6

+

+

+

+### 2. Bold,Italics and striking words

+

+Use `**` for making text bold and `*` for italics.

+

+> You are required to close the tag here.

+

+**Input:**

+

+```md

+

+**Bold**

+*Italics*

+***Bold and Italics Both***

+~~this is the striking one~~

+```

+

+**Output:**

+

+**Bold**

+

+*Italics*

+

+***Bold and Italics Both***

+

+~~this is the striking one~~

+

+

+

+

+### 3. Lists

+

+Use `*` from new line for unordered list, `1.` for order.

+

+> You can also use these with proper indentation.

+

+**Input:**

+

+```md

+

+* Unordered

+

+1. Ordered

+

+* Nested

+ 1. ordered inside.

+ * Unordered inside

+

+```

+

+**Output:**

+

+

+* Unordered

+

+1. Ordered

+

+* Nested

+ 1. ordered inside.

+ * Unordered inside

+

+

+

+

+

+### 4. Hyperlinks and images

+

+Use `[text](url)` for hyperlinks and, `` for image.

+

+> You can also use image links by nesting these both.

+

+**Input:**

+

+```md

+

+[Devincept Website](https://devincept.tech/)

+

+

+

+[](https://devincept.tech/)

+

+```

+

+**Output:**

+

+[Devincept Website](https://devincept.tech/)

+

+

+

+[](https://devincept.tech/)

+

+

+

+

+

+### 5. Coding and notes

+

+* Use ` for liner codes or highlight.

+* Use ``` for multiline code.

+* Use > to give a note.

+

+> You can also use language of code to make the multiline code more realistic, exp: ```python

+

+**Input:**

+

+```md

+ `simple code or highlight`

+

+ > Give a note like this

+

+ ```python

+ #simple python multiline code

+ a=input()

+ c=a

+ print (c)

+ ```

+```

+

+

+

+**Output:**

+

+`simple code or highlight`

+

+

+> Give a note like this

+

+

+```python

+#simple python multiline code

+a=input()

+c=a

+print (c)

+```

+

+

+

+

+### 6. Table

+

+Till now we got to know some important

+

+

+**Input:**

+

+```md

+

+|Heading 1|Heading 2| Heading 3|

+|---------|---------|----------|

+| Data | Data | Data |

+| Data | Data | Data |

+

+```

+

+**Output:**

+

+|Heading 1|Heading 2| Heading 3|

+|---------|---------|----------|

+| Data | Data | Data |

+| Data | Data | Data |

+

+

+

+### 7. The power of HTML

+

+We have seen the basic features of markdown till now, but superpower of markdown is, You can directly use HTML in it.

+

+> Some times it becomes a little difficult to do some complex things in markdown, so using HTML that time can make it work.

+

+**Input:**

+

+

+```md

+Lists or table inside the table can be implemented using HTML

+

+|Heading 1|Heading 2| Heading 3 |

+|---------|---------|----------------------------------------------------------------------------|

+| Data | Data | Data |

+| Data | Data | |

+

+```

+

+**Output:**

+

+|Heading 1|Heading 2| Heading 3|

+|---------|---------|----------|

+| Data | Data | Data |

+| Data | Data | |

+

+

+

+

+### 7. Emojis to make it :heart:

+

+Use emojis in your repository's Readme to make it Awesome :exclamation::exclamation::exclamation:

+

+**Input:**

+

+

+```md

+Use anywhere just like

+

+:bowtie: :blush: :smiley: :relaxed:

+

+:smile: :smirk: :heart_eyes: :kissing_heart:

+

+:heart: :kissing_closed_eyes: :flushed: :relieved:

+

+```

+

+**Output:**

+

+:bowtie: :blush: :smiley: :relaxed:

+

+:smile: :smirk: :heart_eyes: :kissing_heart:

+

+:heart: :kissing_closed_eyes: :flushed: :relieved:

+

+> To get the complete list of github markdown emoji markup [click here](https://gist.github.com/rxaviers/7360908)

+

+

+

+

+

+These were the most used Markdown features. These will help you for you assignment in this module as well as in further program, and without markdown your repository on github is just a code-file, start using it now :wink:

+

+

+

+

+

+

+

+Thanks for Reading!!!

+